Consensus is growing that a major market "correction" is coming: while some infrastructure operators are highly exposed, others may stand to benefit.



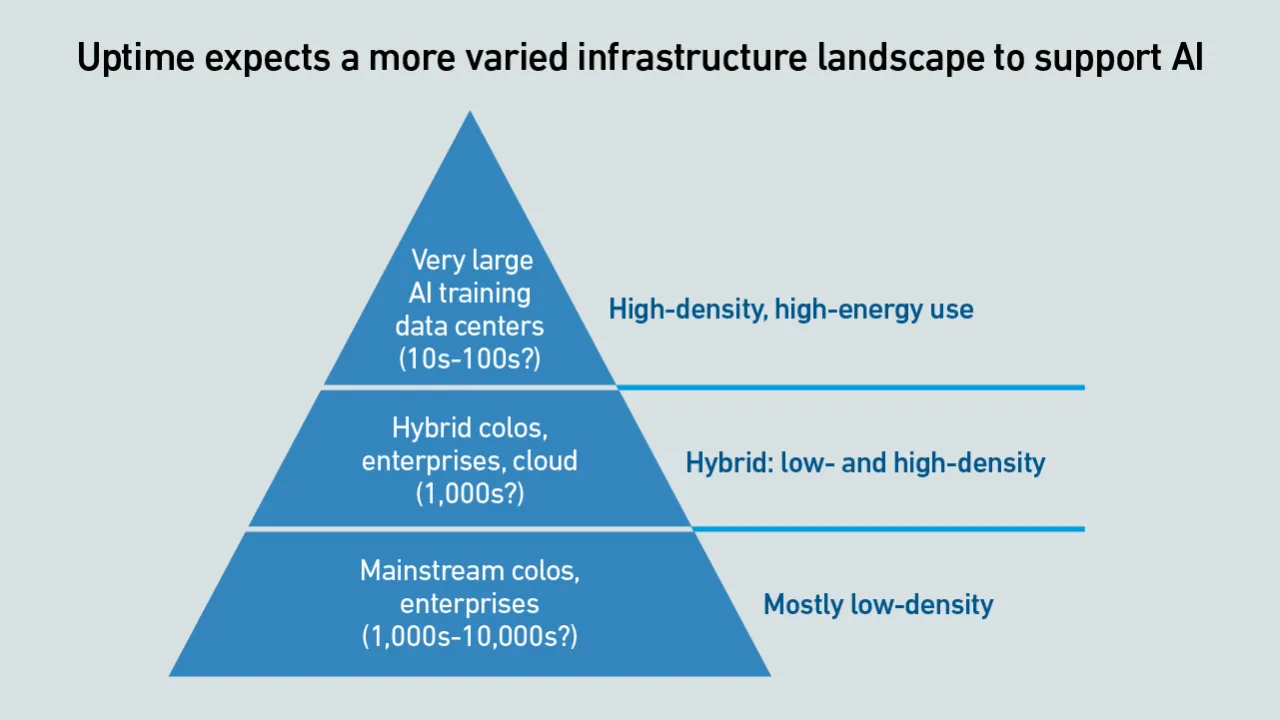

As IT organizations embrace AI, data center facilities and colocation providers need to plan to deploy the supporting infrastructure - despite many uncertainties. Most, however, are still moving cautiously.

Research into neuromorphic computing could lead to the creation of smaller, faster and more energy-efficient AI accelerators. This would have a transformative impact on digital infrastructure.

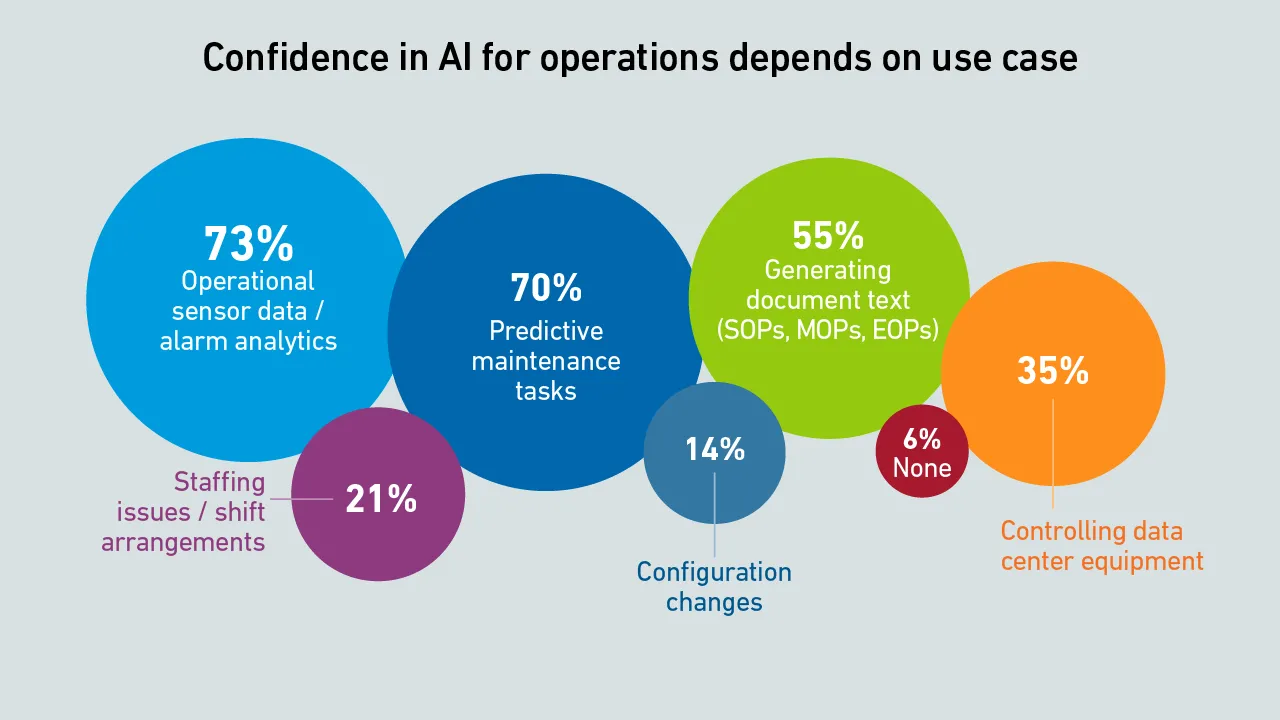

AI is changing how data centers operate: what began with algorithmic fine-tuning of chilled-water plants is now moving into the IT side of operations, closer to the load. But will operators ever trust AI enough to let it run the room?

Large-scale AI training is an application of supercomputing. Supercomputing experts at the Yotta 2025 conference agree that operators need to optimize AI training efficiency and develop metrics to account for utilized power.

By raising debt, building data centers and using colos, neoclouds shield hyperscalers from the financial and technological shocks of the AI boom. They share in the upside if demand grows, but are burdened with stranded assets if it stalls.

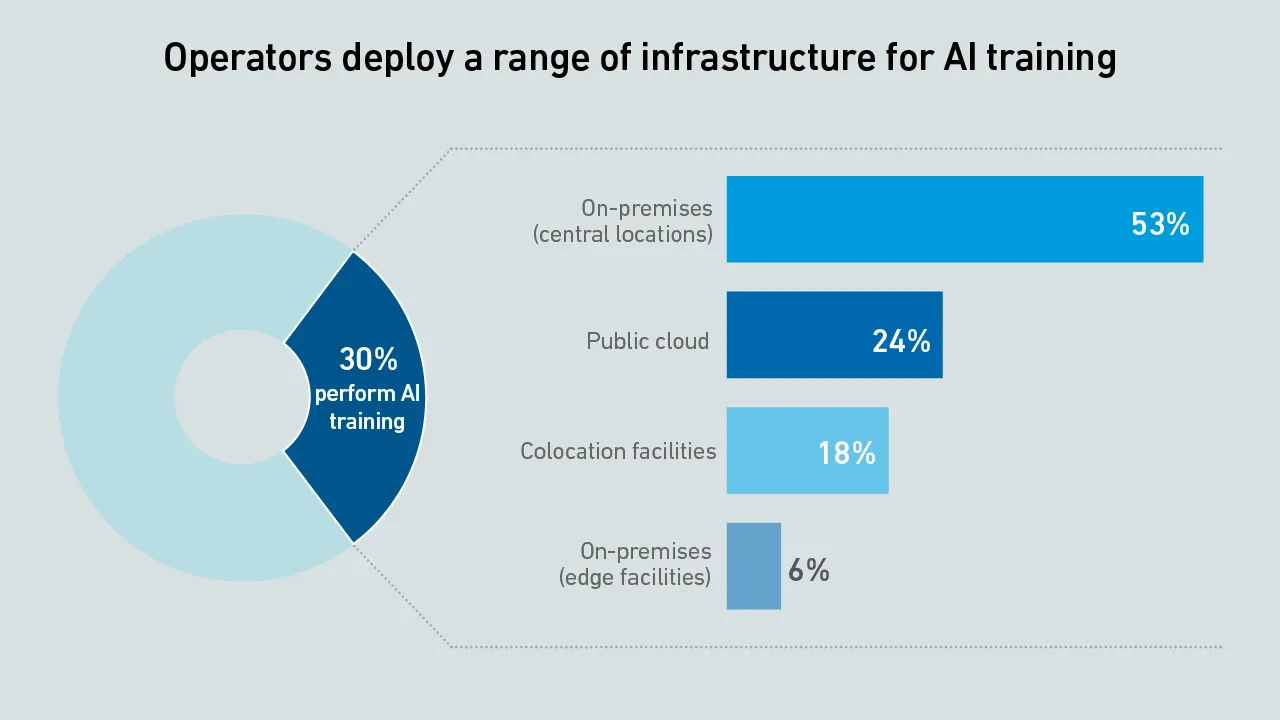

From on-prem AI to high-density IT, this webinar examined survey findings on how operators are preparing for what's next.

AWS has recently cut prices on a range of GPU-backed instances. These price reductions make it harder to justify an investment in dedicated AI infrastructure.

Several operators originally established to mine cryptocurrencies are now building hyperscale data centers for AI. How did this change happen?

The 15th edition of the Uptime Institute Global Data Center Survey highlights the experiences and strategies of data center owners and operators in the areas of resiliency, sustainability, efficiency, staffing, cloud and AI.

The data center industry is on the cusp of the hyperscale AI supercomputing era, where systems will be more powerful and denser than the cutting-edge exascale systems of today. But will this transformation really materialize?

AI training can strain power distribution systems and shorten hardware life - especially in data centers not built for dynamic workloads. Many operators may be underestimating these risks during design and capacity planning.

Training large transformer models is different from all other workloads - data center operators need to reconsider their approach to both capacity planning and safety margins across their infrastructure.

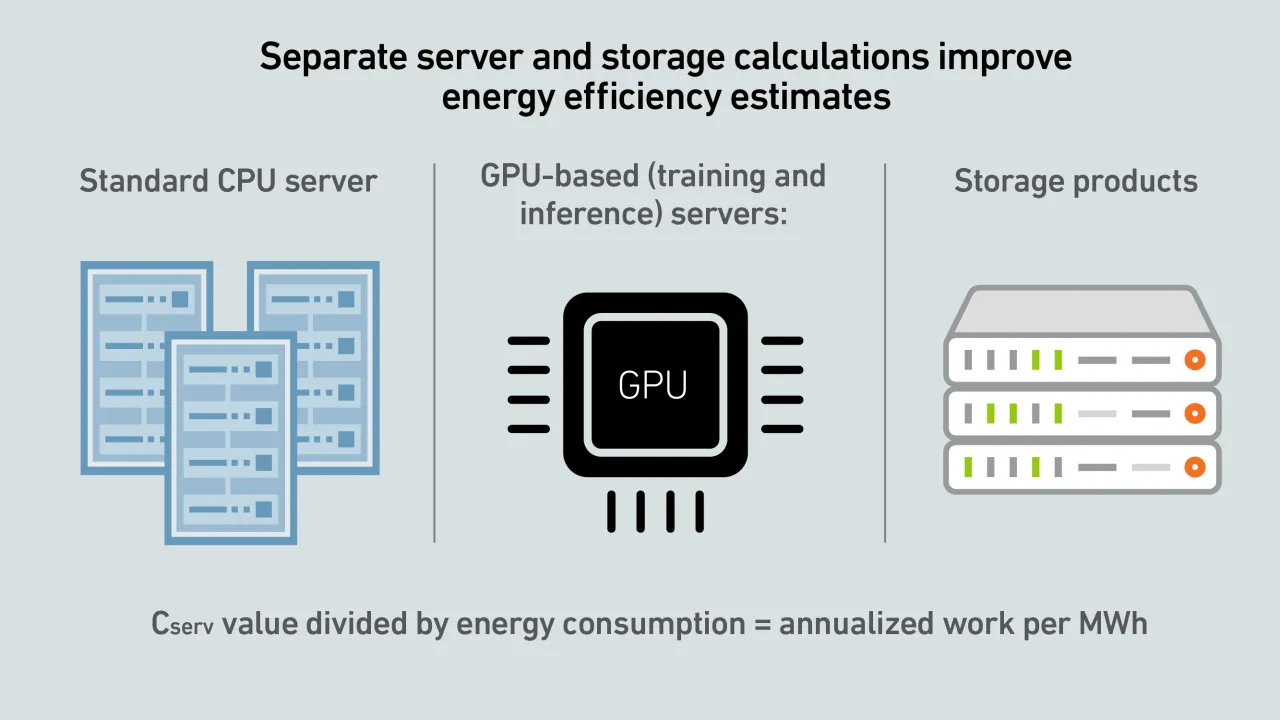

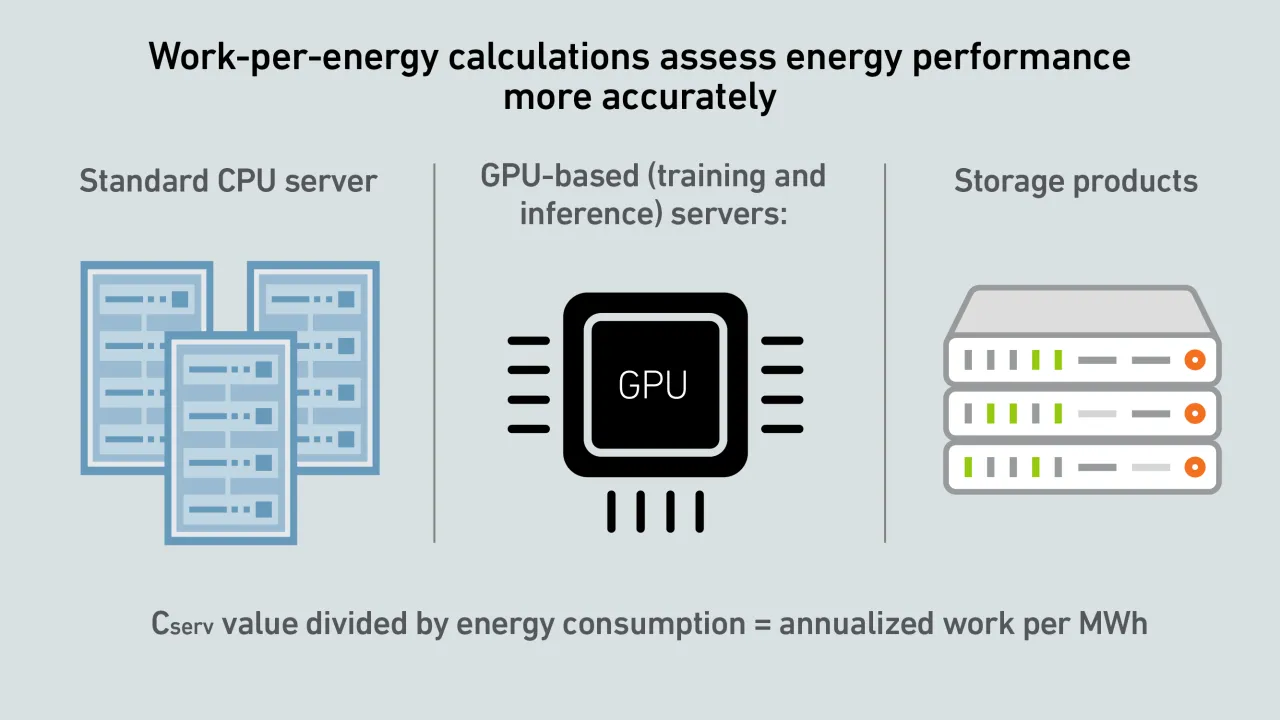

A report by Uptime's Sustainability and Energy Research Director Jay Dietrich merits close attention; it outlines a way to calculate data center IT work relative to energy consumption. The work is supported by Uptime Institute and The Green Grid.

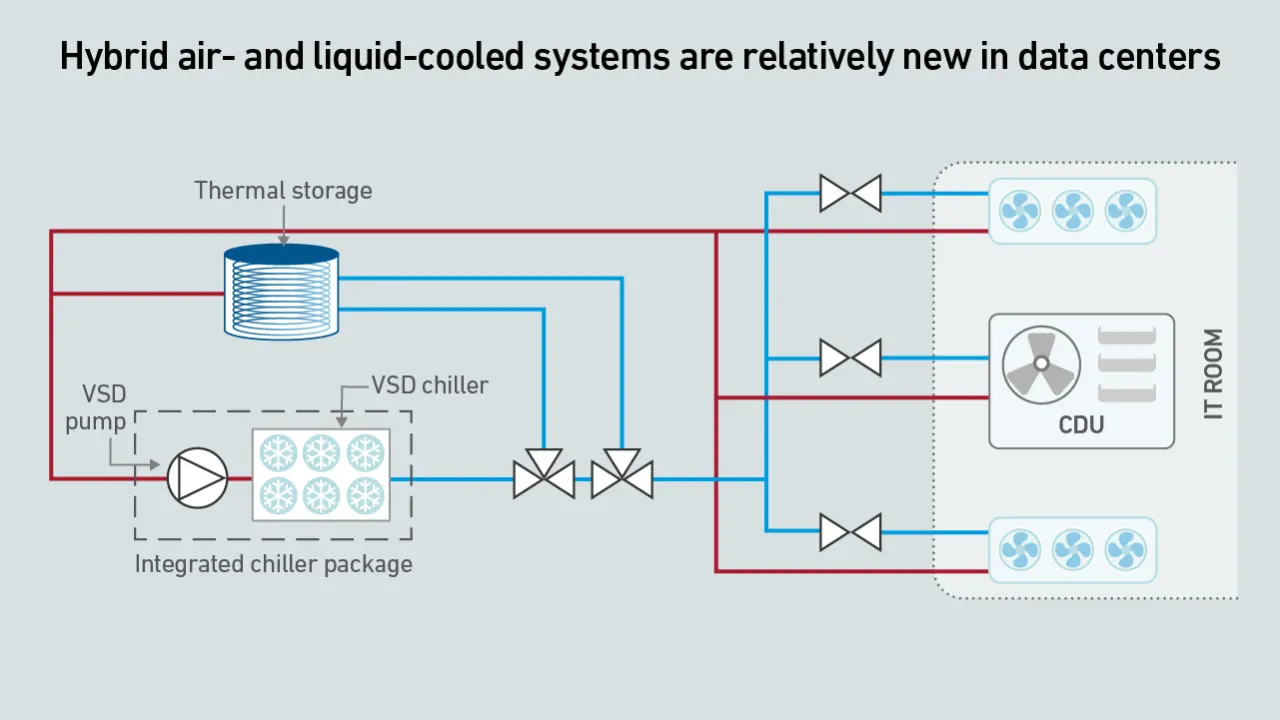

To meet the demands of unprecedented rack power densities, driven by AI workloads, data center cooling systems need to evolve and accommodate a growing mix of air and liquid cooling technologies.

Andy Lawrence


Max Smolaks


Dr. Rand Talib


Jacqueline Davis


Dr. Owen Rogers


Daniel Bizo


Douglas Donnellan


Peter Judge


Rose Weinschenk


Dr. Tomas Rahkonen
