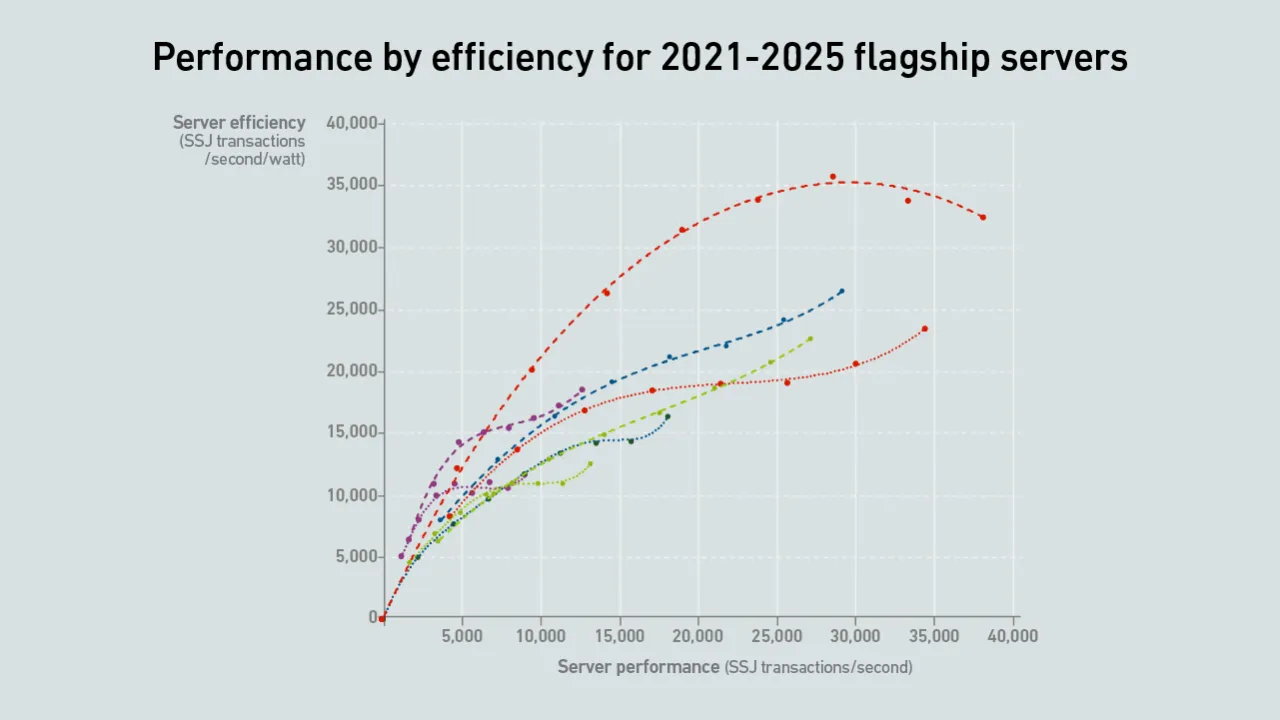

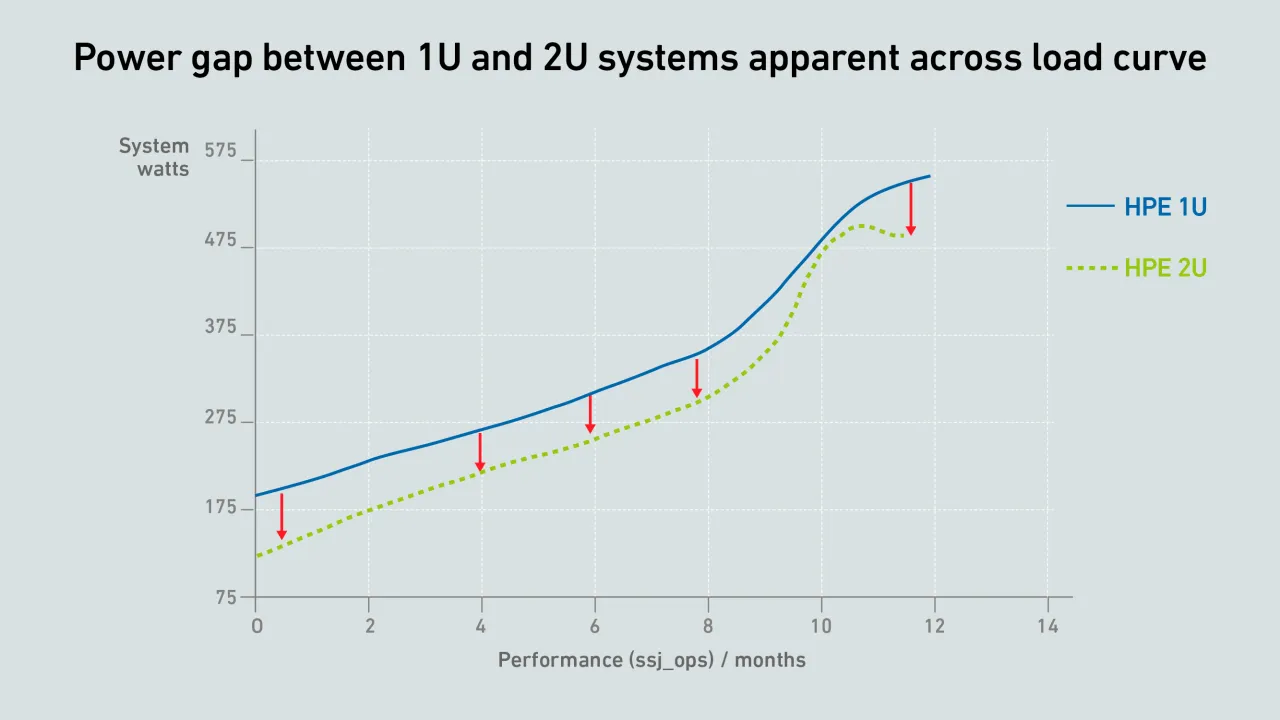

New flagship AMD and Intel servers with high core counts push performance boundaries while improving efficiency. This report breaks down and visualizes these improvements, based on an analysis of SPEC SERT (Server Efficiency Rating Tool) data.

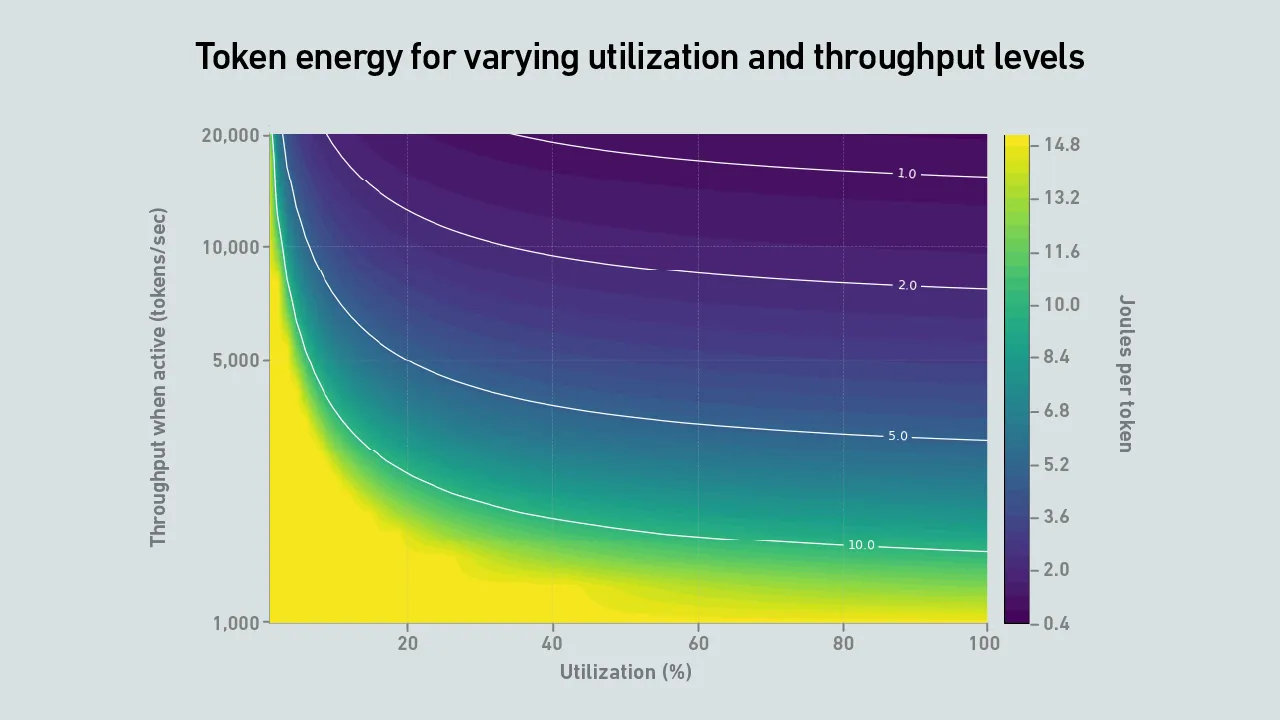

This tool — to accompany the Uptime report "The problem with energy per token" — explores how energy and carbon per token vary depending on throughput, utilization, power consumption and grid carbon intensity, even when using the same hardware.
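
As a rough illustration of how these factors combine, carbon per token can be computed as energy per token (average power divided by token throughput) converted to kilowatt-hours and multiplied by grid carbon intensity. The sketch below is a simplified model under stated assumptions, not the tool's actual method; the function name and all input values (a 10 kW server, two throughput levels, grids at 400 and 50 gCO2/kWh) are hypothetical.

```python
JOULES_PER_KWH = 3_600_000  # 1 kWh = 3.6 MJ


def grams_co2_per_million_tokens(avg_power_w: float, tokens_per_s: float,
                                 grid_g_per_kwh: float) -> float:
    """Hypothetical helper: gCO2 emitted per million tokens.

    Watts are joules per second, so J/token = W / (tokens/s). Scale to a
    million tokens, convert joules to kWh, then multiply by grid intensity.
    """
    if tokens_per_s <= 0:
        raise ValueError("throughput must be positive")
    joules_per_token = avg_power_w / tokens_per_s
    kwh_per_million = joules_per_token * 1_000_000 / JOULES_PER_KWH
    return kwh_per_million * grid_g_per_kwh


# Hypothetical inputs: the same 10 kW server at two throughput levels,
# running on a carbon-heavy grid and a low-carbon grid.
for tps in (20_000, 10_000):
    for grid in (400, 50):
        grams = grams_co2_per_million_tokens(10_000, tps, grid)
        print(f"{tps} tokens/s, {grid} gCO2/kWh: {grams:.1f} g CO2 per million tokens")
```

In this simplified model, halving throughput at constant power doubles carbon per token, and moving to a cleaner grid scales it down proportionally, even though the hardware is identical.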

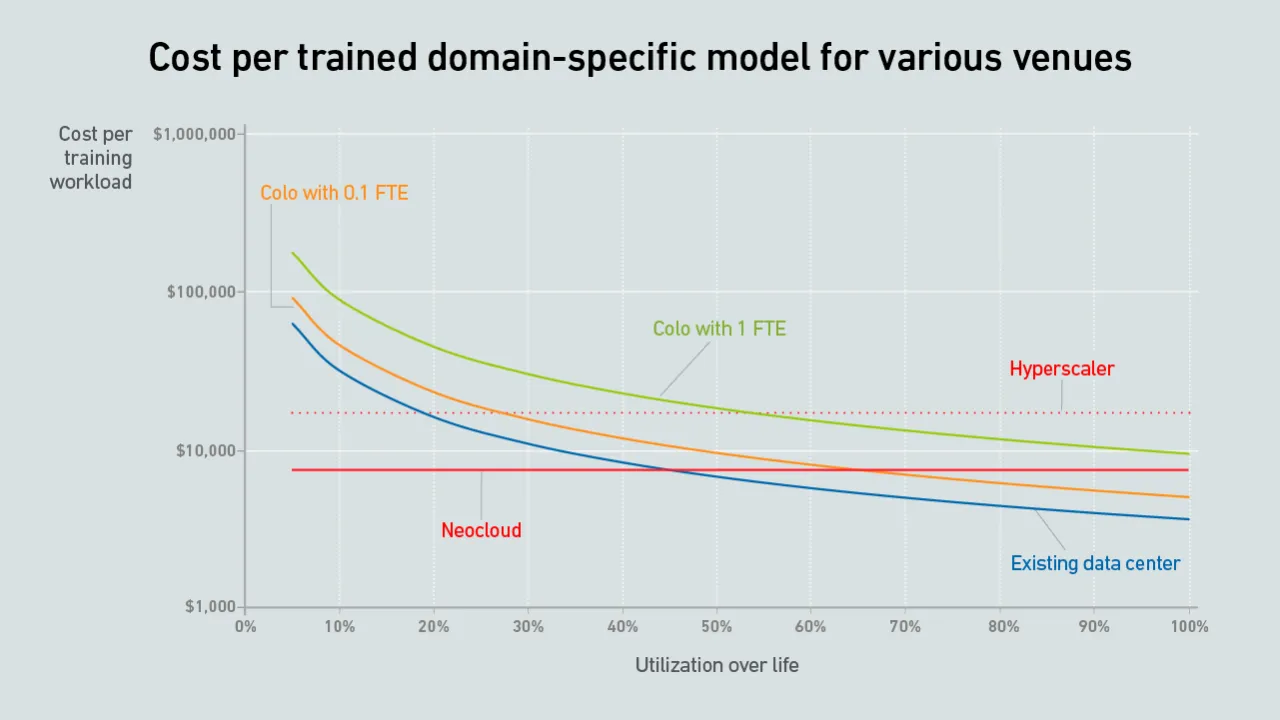

In AI model training, idle GPUs — not high prices — are the biggest driver of cost, with poor utilization quietly burning tens of thousands of dollars in wasted compute capacity.
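
A back-of-envelope sketch of how poor utilization turns into wasted spend: if GPUs are paid for whether or not they are busy, the idle share of total spend is roughly total cost multiplied by (1 minus utilization). The helper name, GPU count, $2.50 hourly rate and 30-day duration below are hypothetical assumptions for illustration only, not figures from the Uptime report, and the model treats all unused capacity as waste.

```python
def idle_spend(gpu_count: int, hourly_rate_per_gpu: float, hours: float,
               utilization: float) -> float:
    """Hypothetical helper: spend on provisioned but unused GPU capacity."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    total = gpu_count * hourly_rate_per_gpu * hours
    # Simplifying assumption: every non-utilized GPU-hour is wasted spend.
    return total * (1.0 - utilization)


# Hypothetical training run: 64 GPUs at $2.50 per GPU-hour for 30 days.
for util in (0.9, 0.6, 0.4):
    wasted = idle_spend(64, 2.50, 30 * 24, util)
    print(f"utilization {util:.0%}: ${wasted:,.0f} spent on idle capacity")
```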

The cost of AI training depends on venue choice and infrastructure utilization. This costing tool — to accompany the Uptime report "Where to deploy AI training" — calculates workload infrastructure costs for colo, cloud and enterprise data centers.

Benchmarks may produce impressive energy-per-token metrics, but real-world AI workloads are bursty; when throughput drops and GPUs sit idle, joules per token can increase. Do not size AI infrastructure for lab conditions — plan for demand.
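
Why joules per token rises when throughput drops: energy per token is average power divided by tokens per second, and a server's power draw falls much less than its output as it idles. The minimal sketch below assumes a linear power model between idle and full-load draw and throughput proportional to utilization; the helper names and the 4 kW idle, 10 kW full-load and 20,000 tokens/s figures are hypothetical, not measurements from the report.

```python
def joules_per_token(avg_power_w: float, tokens_per_s: float) -> float:
    """Energy per token: watts are joules per second, so J/token = W / (tokens/s)."""
    if tokens_per_s <= 0:
        raise ValueError("throughput must be positive")
    return avg_power_w / tokens_per_s


def average_power(idle_w: float, busy_w: float, utilization: float) -> float:
    """Hypothetical linear model: power scales from idle draw to full-load draw."""
    return idle_w + (busy_w - idle_w) * utilization


# Hypothetical inference server: 4 kW idle, 10 kW at full load, 20,000 tokens/s peak.
IDLE_W, BUSY_W, PEAK_TPS = 4_000.0, 10_000.0, 20_000.0

for util in (1.0, 0.5, 0.2):
    power = average_power(IDLE_W, BUSY_W, util)
    tps = PEAK_TPS * util  # assumes throughput scales linearly with utilization
    print(f"utilization {util:.0%}: {joules_per_token(power, tps):.2f} J/token")
```

Under these assumptions, energy per token rises from 0.50 J at full load to 1.30 J at 20% utilization, because the idle power floor is spread over far fewer tokens.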

An alert from the North American grid connection authority shows that data centers will be treated similarly to generation assets when requesting power connections, requiring operators to share more information and permit operational monitoring.

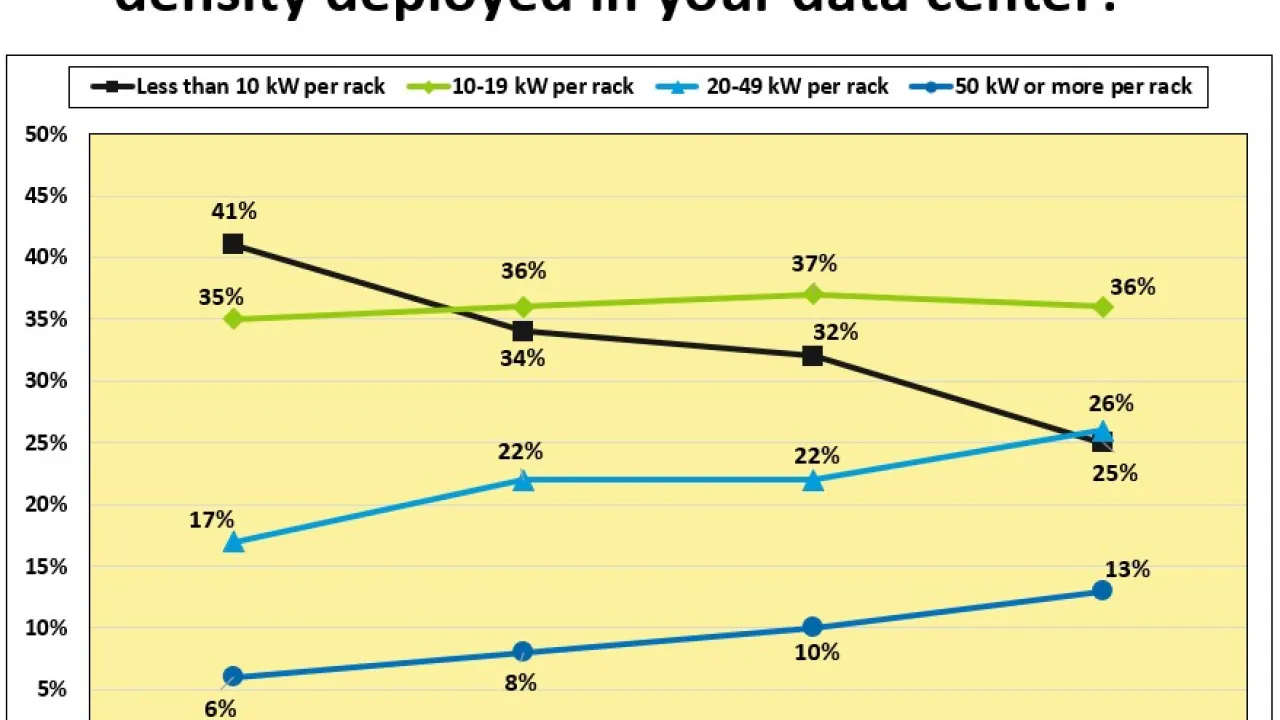

An exclusive focus on densification and direct liquid cooling (DLC), treated as if both were inevitable, risks becoming tunnel vision that ignores costs and alternative choices. For IT infrastructure that is not fully transitioning to DLC, keeping densities moderate may make more sense.

When choosing between developing a brand-new LLM and fine-tuning an existing one, the second option often makes more sense: it can be more cost-effective and requires fewer IT and facility resources.

By integrating new natural gas electricity generation with carbon capture, operators can safeguard net-zero targets threatened by a reliance on fossil power — but initial adoption will be costly and limited to specific geographic locations.

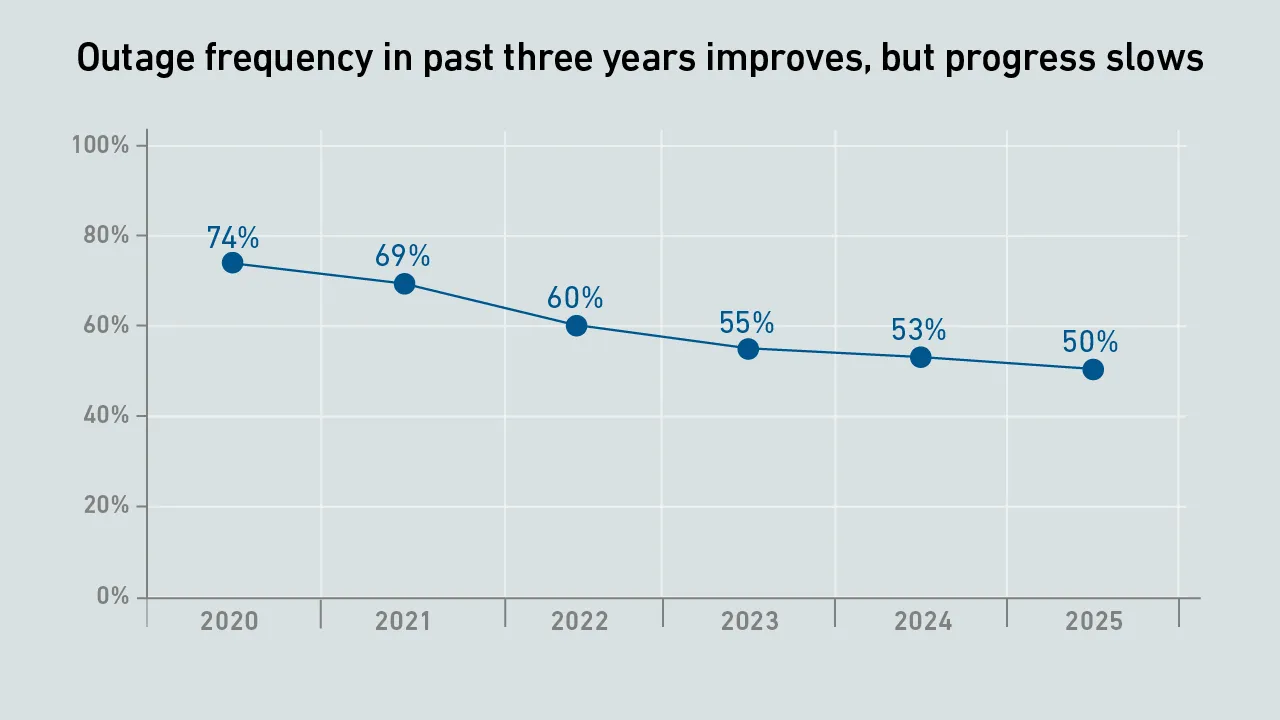

Even as the pressure to meet the demands of AI infrastructure intensifies, preventing outages remains a top priority for data center operators, yet failures still occur. This report analyzes recent Uptime data on outages: their causes, costs and consequences.

AI applications are becoming critical to enterprise operations, but service availability still varies sharply across providers. Inference services should be evaluated not only on model capability, but on operational maturity.

As US state legislatures face difficulties passing local bans on data centers, opponents are increasingly turning to new regulatory approaches. Data center operators will need to navigate this fragmented policy landscape to stay ahead of compliance requirements.

A new generation of vendors is entering the market to offer prefabricated modular data centers — betting that surging demand for AI will give these facilities a competitive edge.

The 16th edition of the Uptime Institute Global Data Center Survey highlights the experiences and strategies of data center owners and operators in the areas of resiliency, sustainability, efficiency, staffing, cloud and AI. The attached data files…

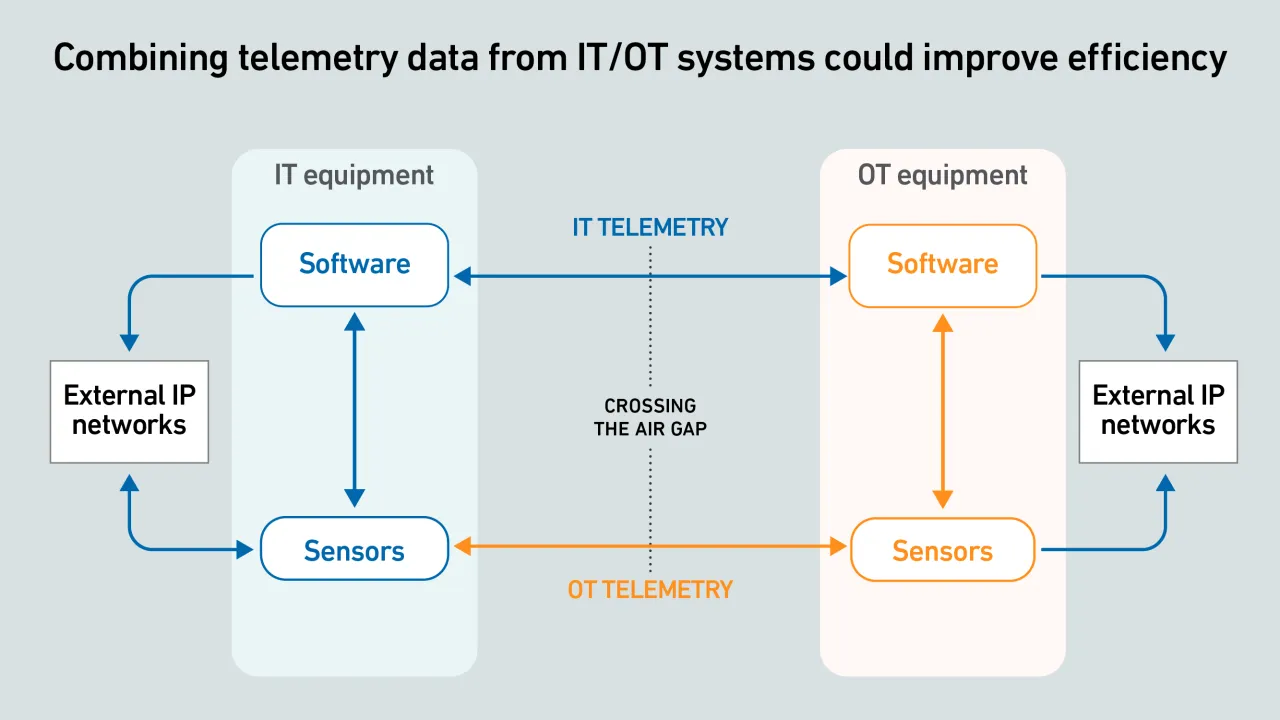

IT-OT equipment telemetry offers huge potential to improve visibility into live facility operations, but data exchange often fails because systems and protocols are incompatible.

Dr. Tomas Rahkonen

Dr. Owen Rogers

Peter Judge

Daniel Bizo

Max Smolaks

Douglas Donnellan

Andy Lawrence

Rose Weinschenk

Paul Carton

Anthony Sbarra

Laurie Williams

John O'Brien