A major outage at AWS's Virginia region took many global organizations offline. What can enterprises do to reduce or negate the impact of such widespread outages?

Dr. Owen Rogers

Dr. Owen Rogers is Uptime Institute’s Senior Research Director of Cloud Computing. He has been analyzing the economics of cloud for over a decade as a chartered engineer, product manager and industry analyst. He covers all areas of cloud, including AI, FinOps, sustainability, hybrid infrastructure and quantum computing.

orogers@uptimeinstitute.com

Latest Research

The US CLOUD Act lets US authorities access cloud data stored overseas. Encryption offers protection only when keys are firmly controlled, which creates challenges for enterprises but an opportunity for colocation providers.

By raising debt, building data centers and using colos, neoclouds shield hyperscalers from the financial and technological shocks of the AI boom. They share in the upside if demand grows, but are burdened with stranded assets if it stalls.

The remote nature of cloud computing makes it vulnerable to extraterritorial legal reach. Colocation providers, by contrast, manage only infrastructure, not data, which shields them from the same level of foreign governmental access.

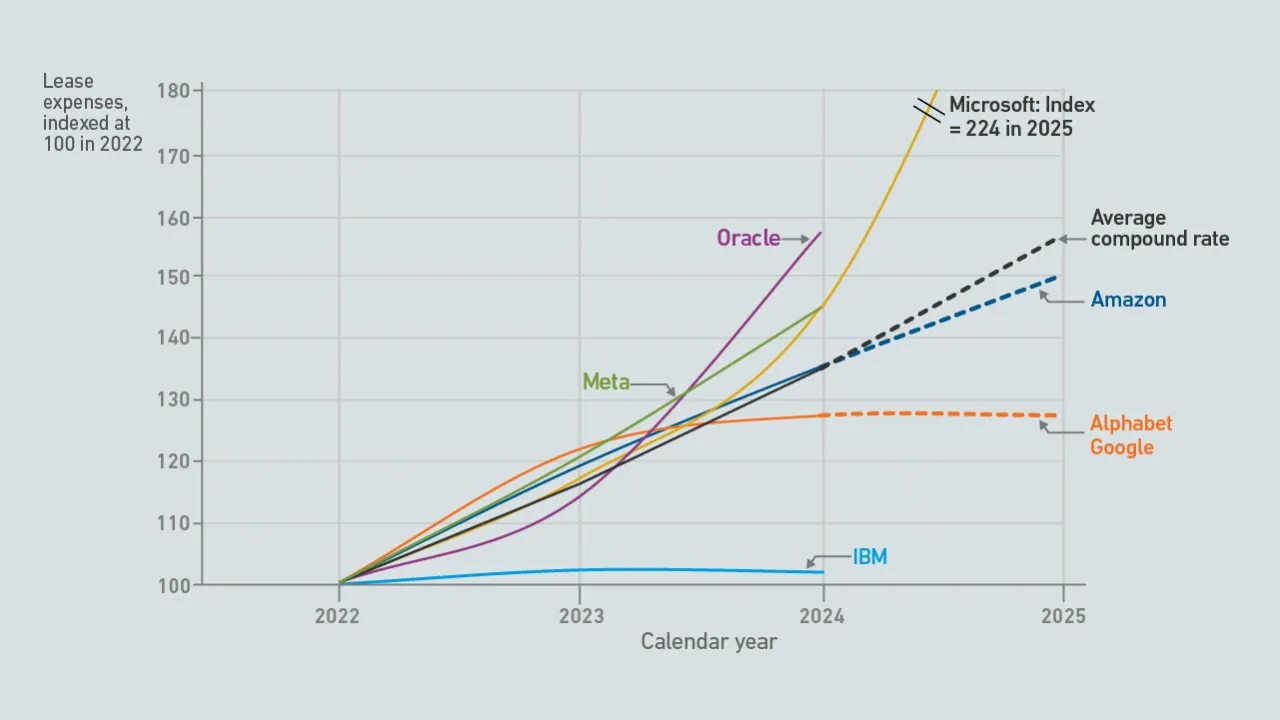

Financial data suggests that hyperscalers' use of colocation facilities has grown substantially over the past few years. Their investment in colocation also shows no signs of slowing down.

AWS has recently cut prices on a range of GPU-backed instances. These price reductions make it harder to justify an investment in dedicated AI infrastructure.

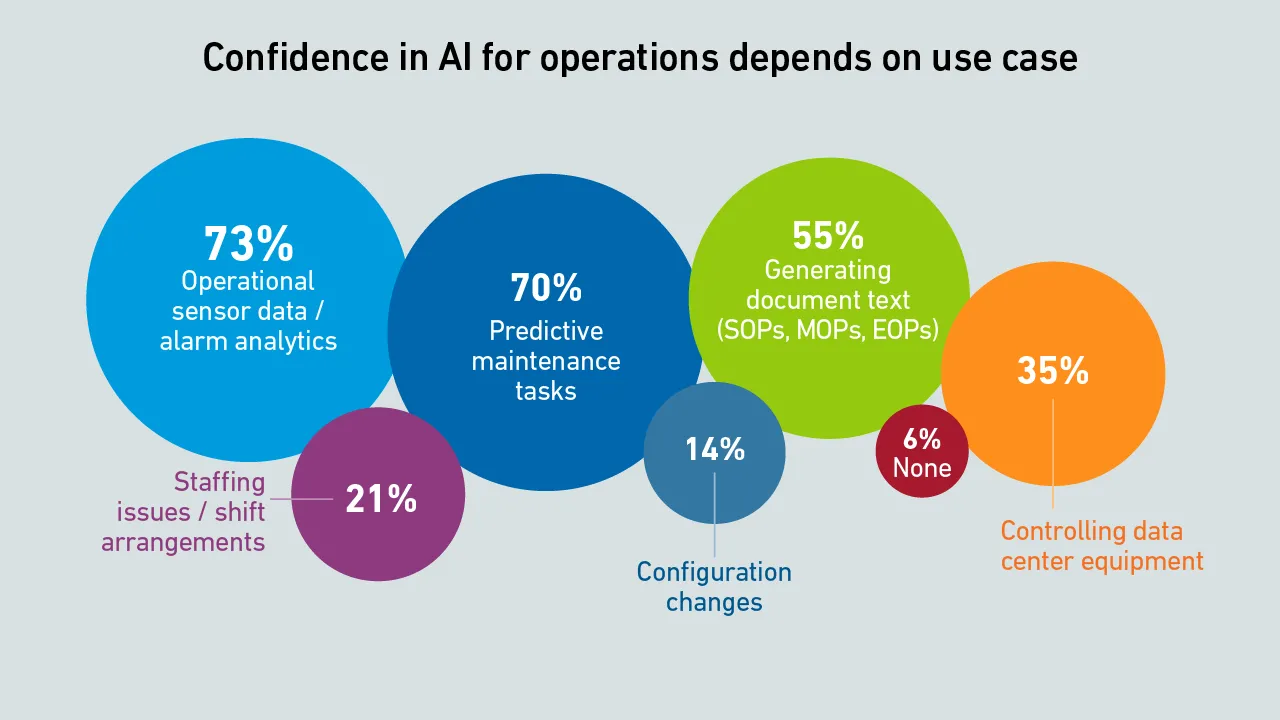

The 15th edition of the Uptime Institute Global Data Center Survey highlights the experiences and strategies of data center owners and operators in the areas of resiliency, sustainability, efficiency, staffing, cloud and AI.

The terms "retail" and "wholesale" colocation not only describe different types of colocation customers, but also how providers price and package their products, and the extent to which customers can specify their requirements.

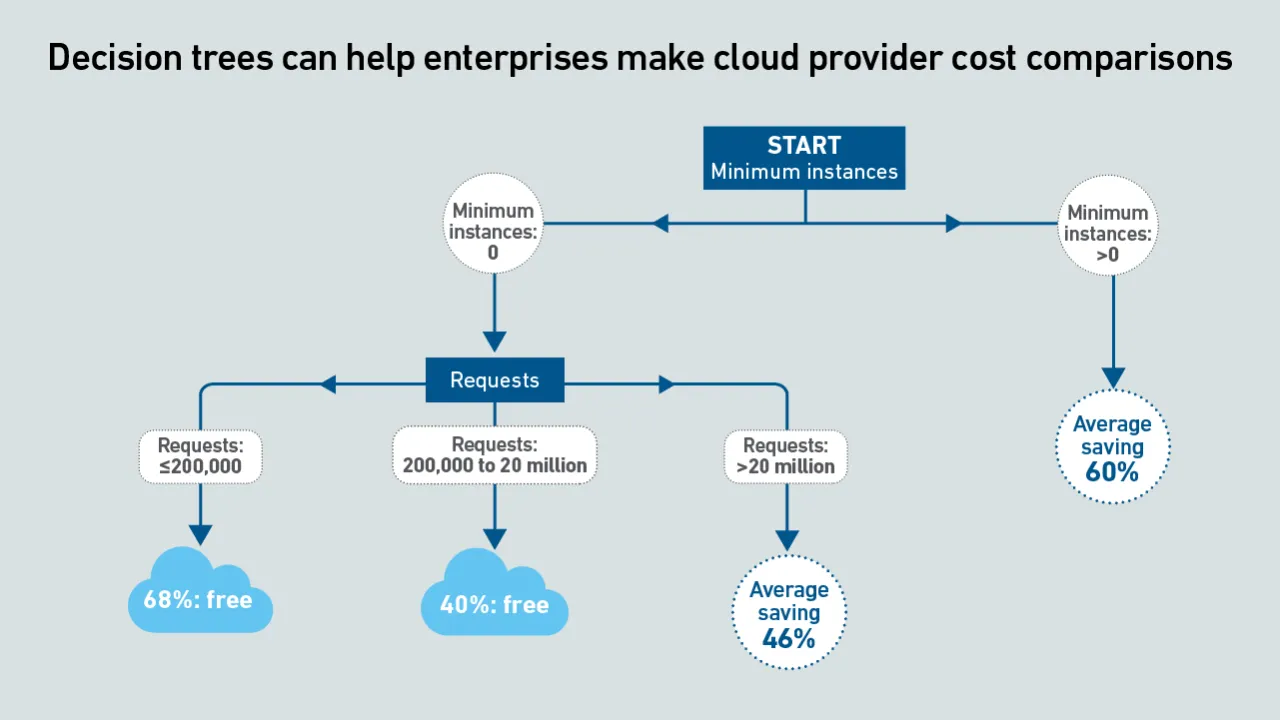

Serverless container services enable rapid, per-second scalability, which is ideal for AI inference. However, inconsistent and opaque pricing metrics hinder comparisons. This pricing tool compares the cost of services across providers.

Serverless container services enable rapid scalability, which is ideal for AI inference. However, inconsistent and opaque pricing metrics hinder comparisons. This report uses machine learning to derive clear guidance by means of decision trees.

Current geopolitical tensions are eroding some European organizations' confidence in the security of hyperscalers; however, moving away from them entirely is not practically feasible.

As AI workloads surge, managing cloud costs is becoming more vital than ever, requiring organizations to balance scalability with cost control. Striking this balance is crucial to prevent runaway spend and keep AI projects viable and profitable.

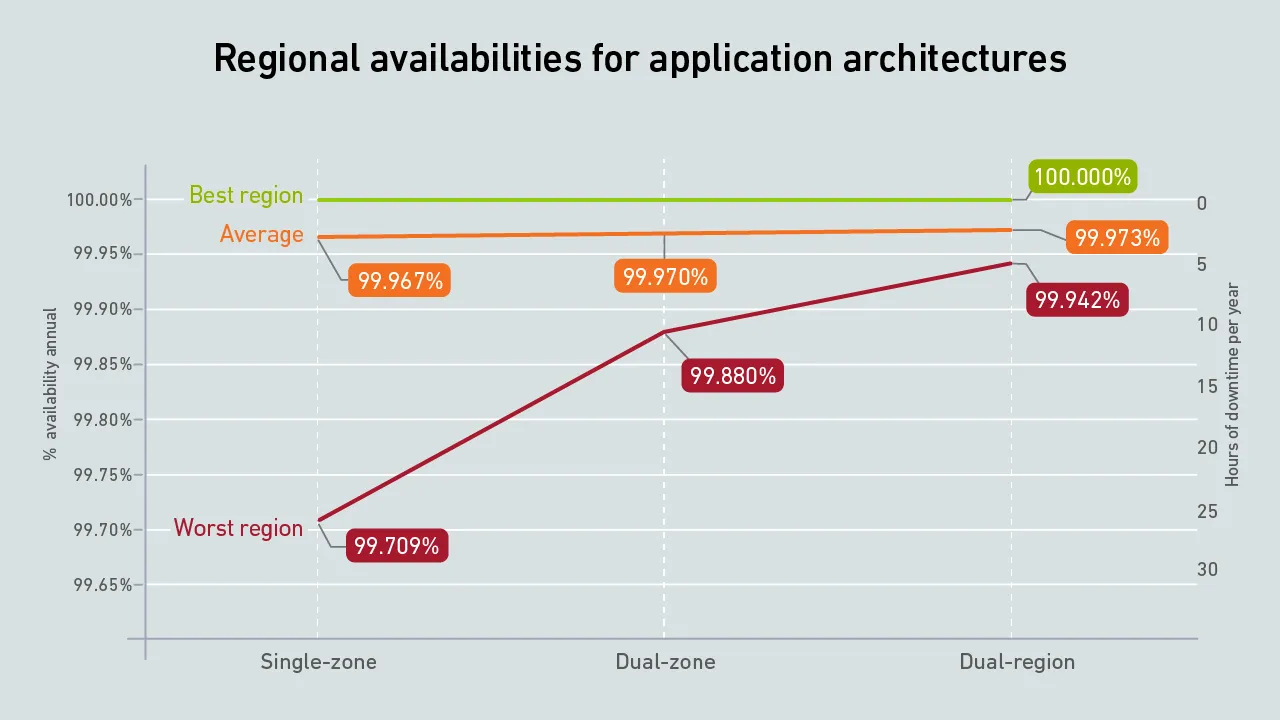

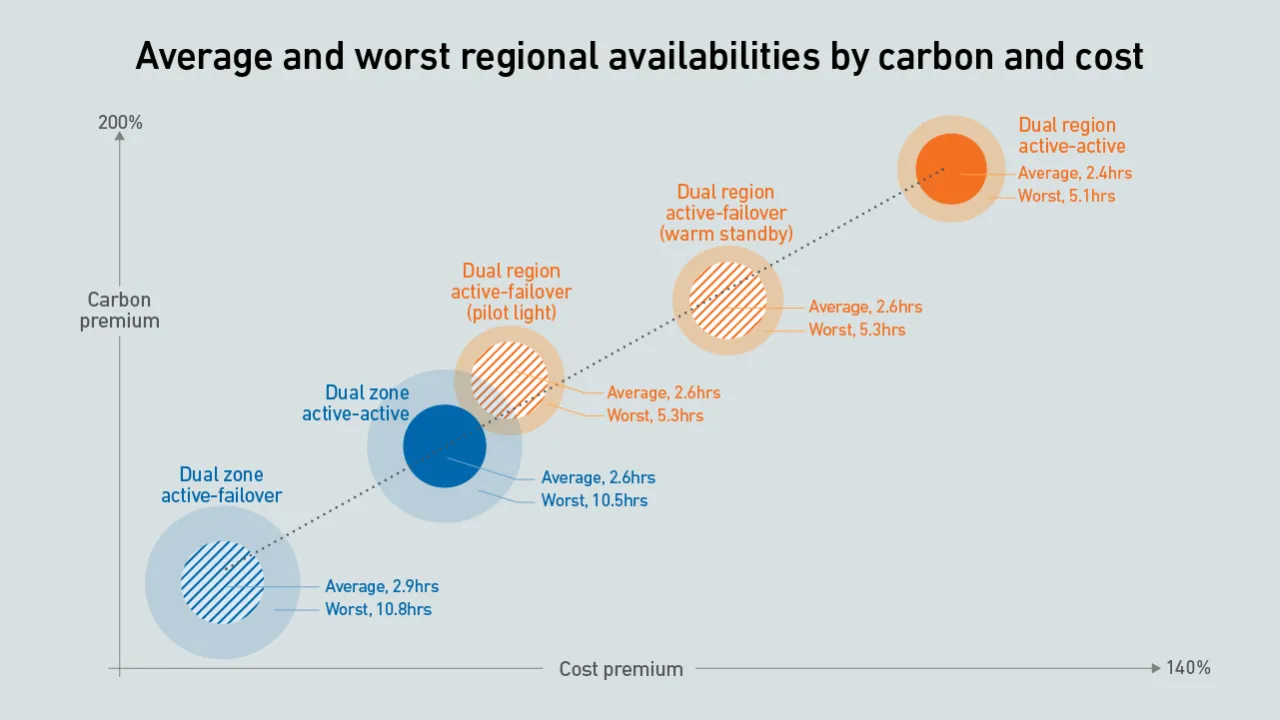

Organizations that architect resiliency into their cloud applications should expect a sharp rise in carbon emissions and costs. Some architectures provide a better compromise on cost, carbon and availability than others.

Preventing outages continues to be a central focus for data center owners and operators. While infrastructure design and resiliency frameworks have improved in many cases, the complexity of modern architectures continues to present new risks that…

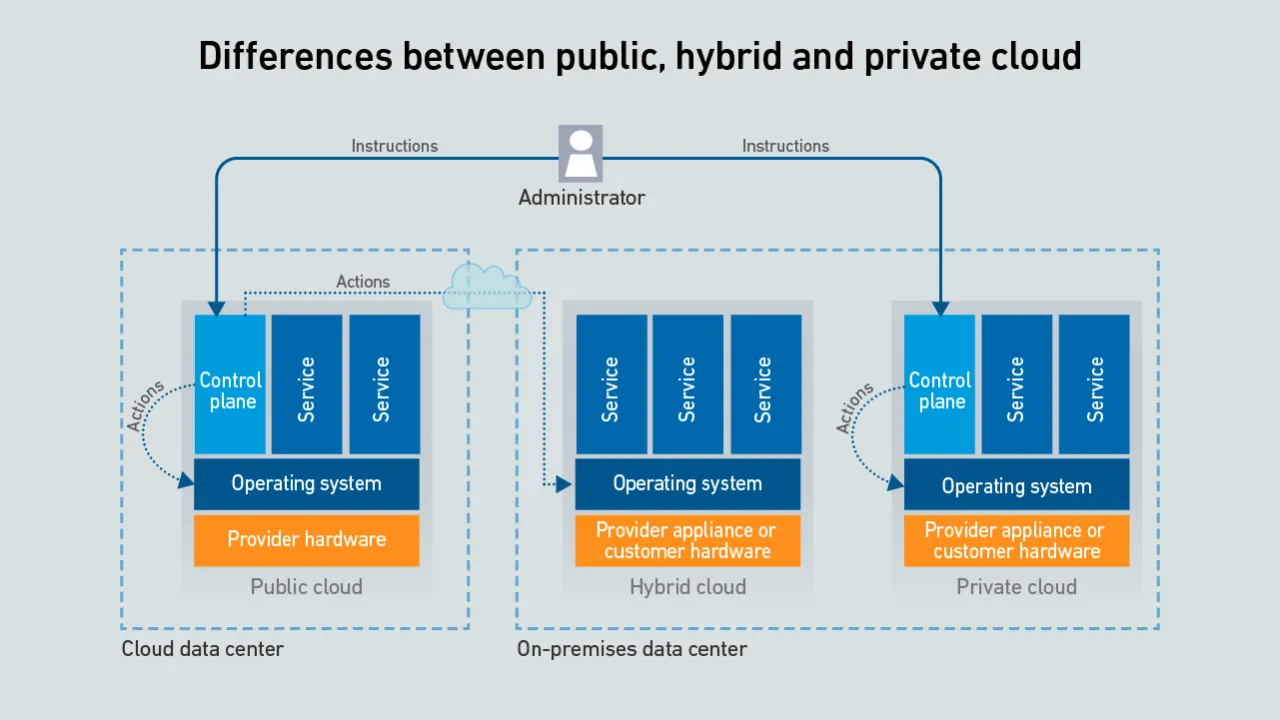

Hyperscalers offer a confusing array of on-premises versions of their public cloud infrastructure. The differences between them come down to whether the customer or the provider manages the control plane and the server hardware.