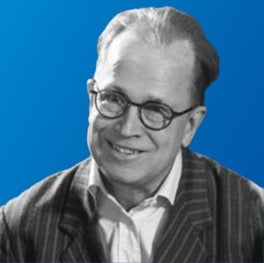

New flagship AMD and Intel servers with high core counts push performance boundaries while improving efficiencies. This report breaks down and visualizes improvements based on an analysis of SERT data.

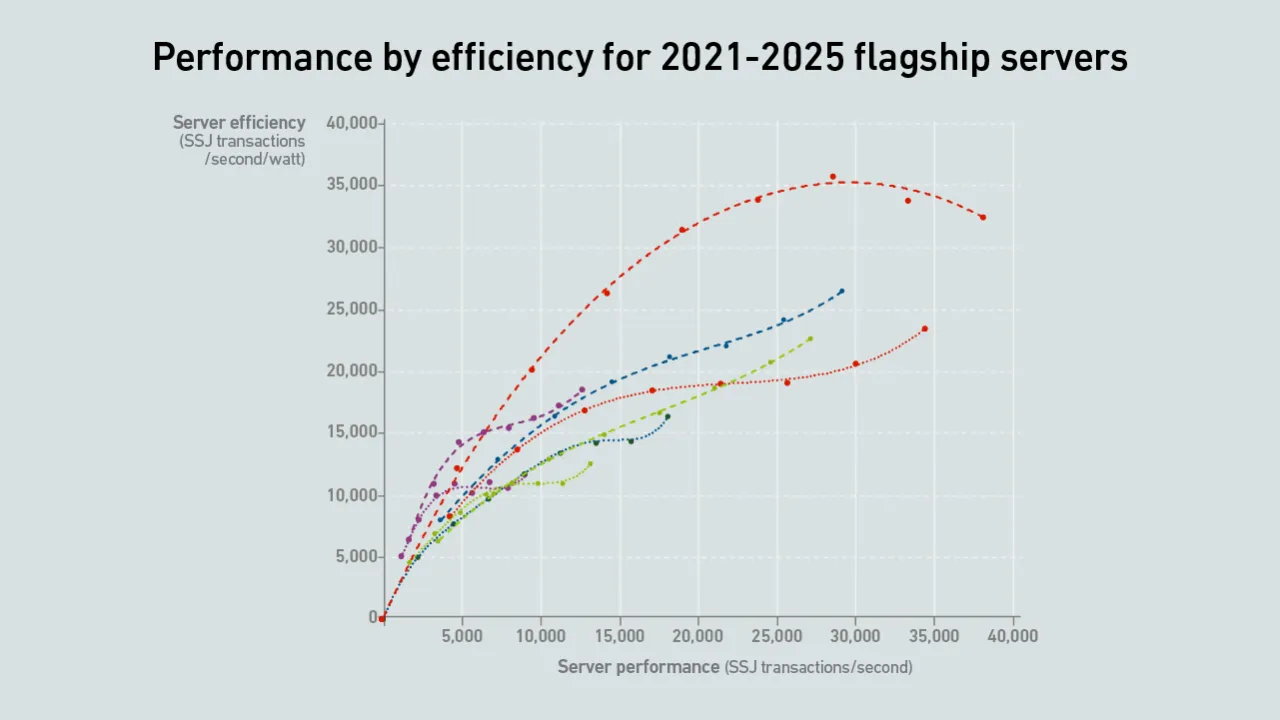

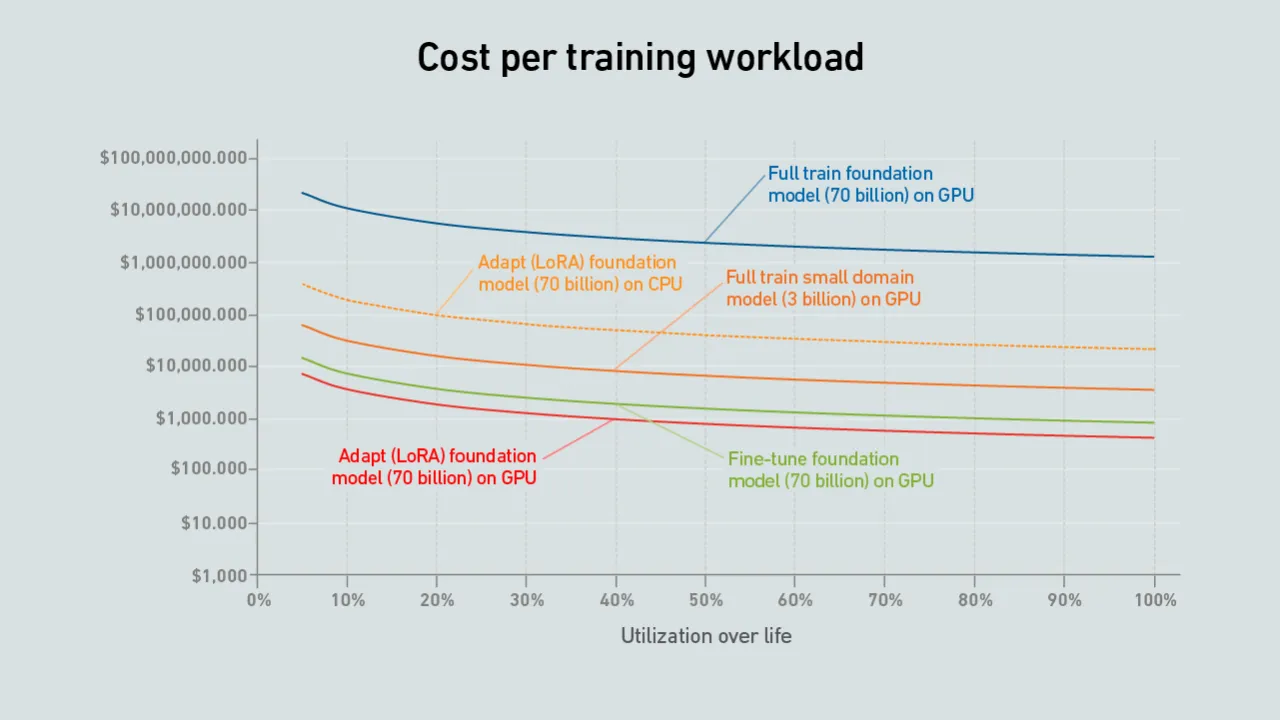

In AI model training, idle GPUs — not high prices — are the biggest driver of cost, with poor utilization quietly burning tens of thousands of dollars in wasted compute capacity.

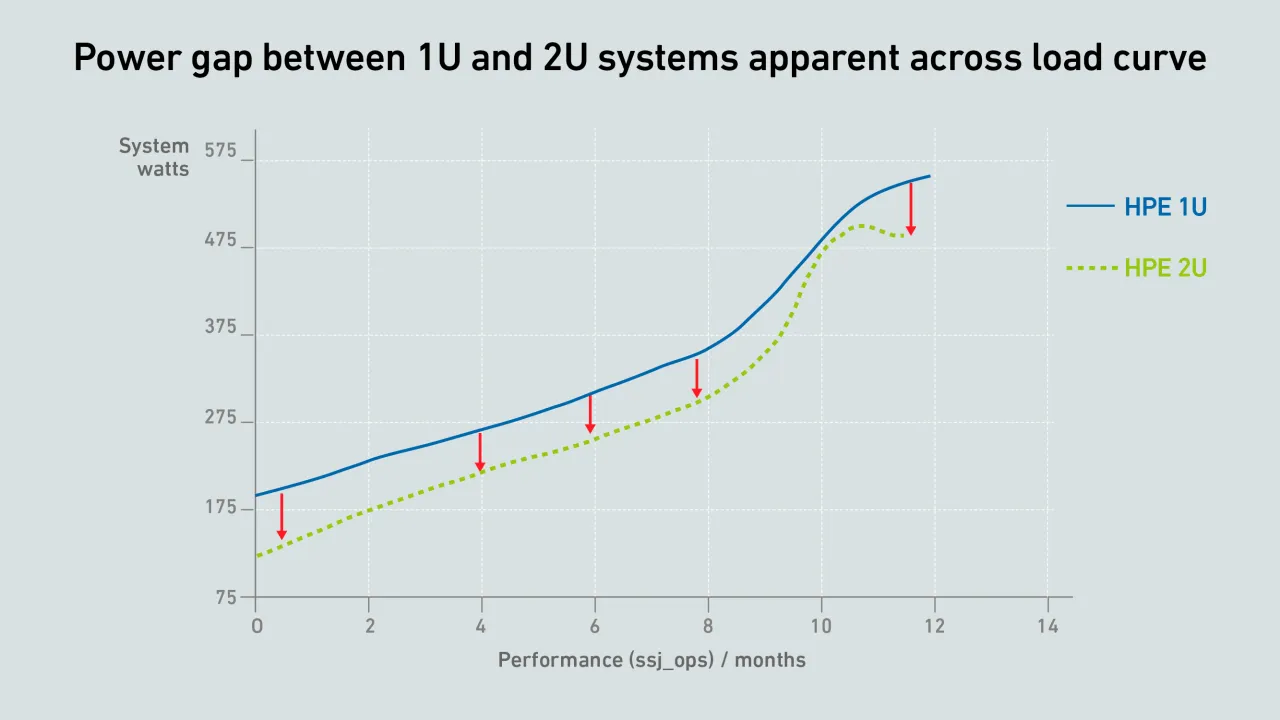

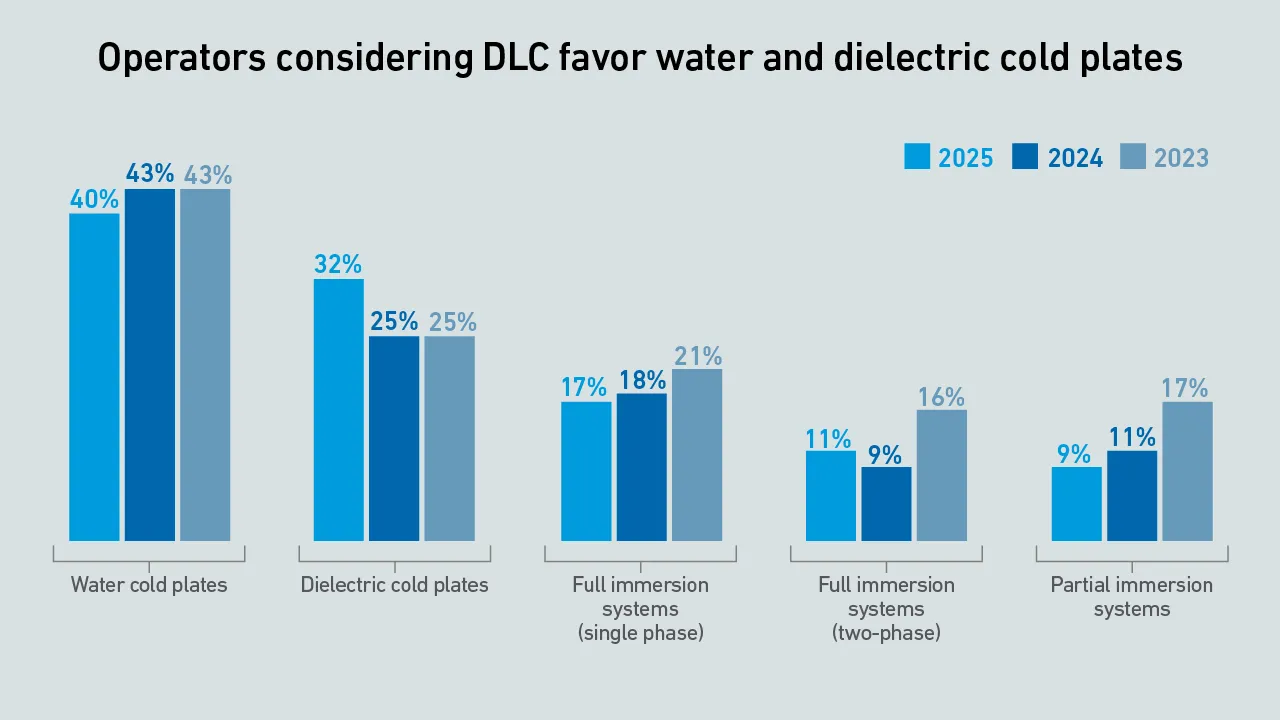

An exclusive focus on densification and DLC (as if they were inevitable) risks becoming tunnel vision that ignores costs and alternative choices. For IT infrastructure not fully transitioning to DLC, keeping densities moderate may make more sense.

When choosing whether to develop a brand new LLM or fine-tune an existing one, the second option often makes more sense. It can be more cost-effective and requires fewer IT and facility resources.

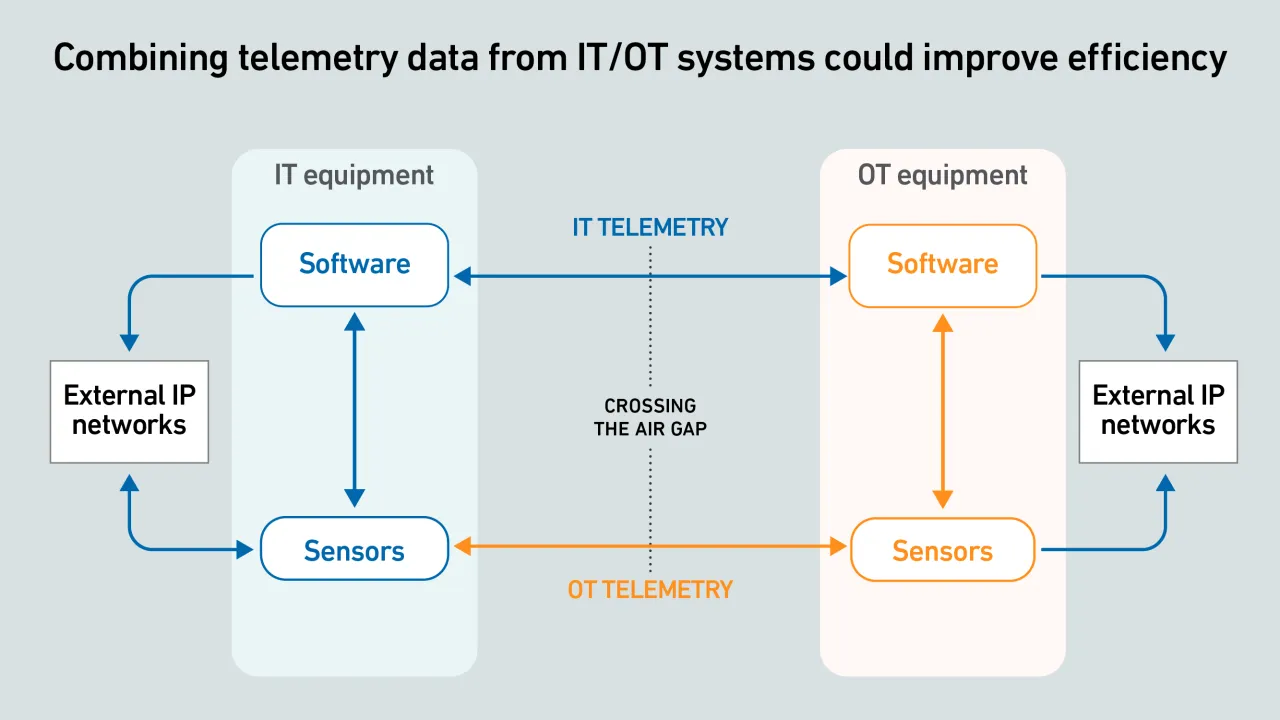

IT-OT equipment telemetry offers huge potential to improve visibility into live facility operations, but data exchange often fails because systems and protocols are incompatible.

While water cold plates continue to dominate current liquid-cooling adoption, the industry has also turned its attention to different approaches, with two-phase cold plates in particular becoming a promising alternative.

Choosing whether to train a model from scratch or fine-tune an existing one comes down to the use case and cost — with hardware utilization remaining an important cost factor.

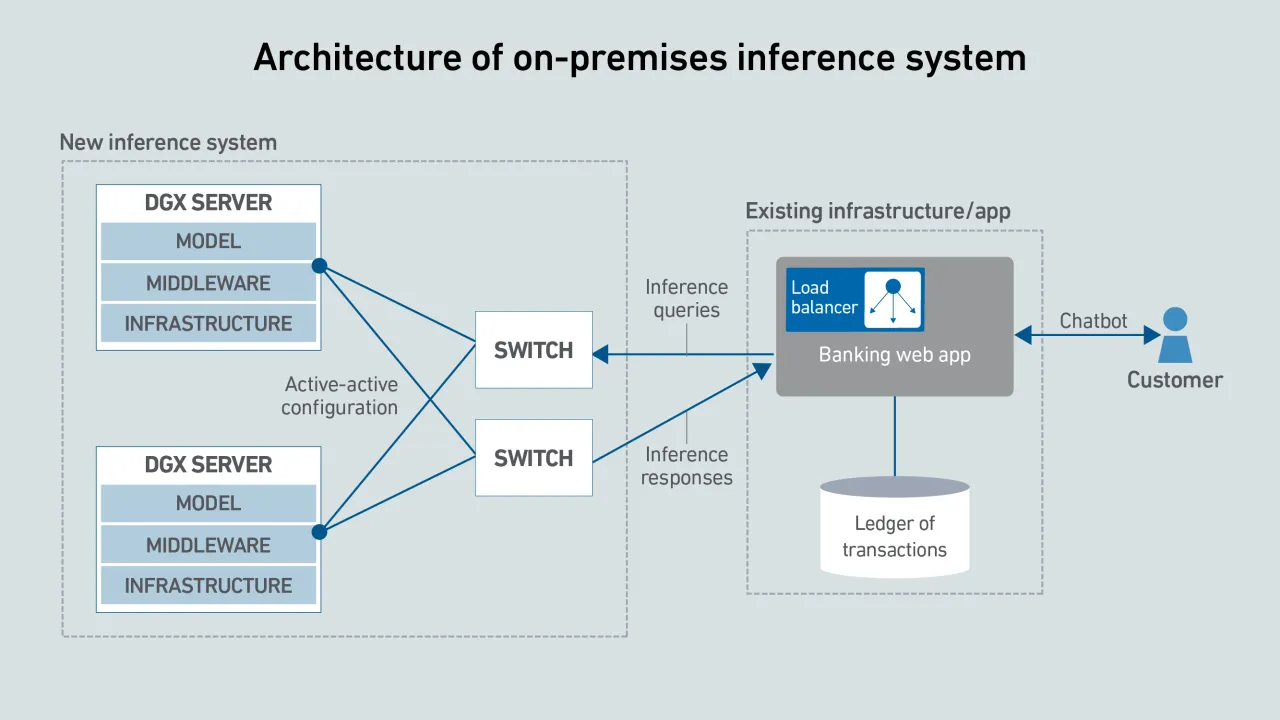

Enterprises deploying AI inference need to choose carefully to limit costs and protect their data.

Although cloud platforms often offer the lowest cost for AI inference, on-premises deployment may be preferable due to application architecture, data locality and control requirements.

The cost of AI inference varies widely depending on deployment model, utilization and hardware. This costing tool compares on-premises, colocation and managed AI platforms on a like-for-like basis.

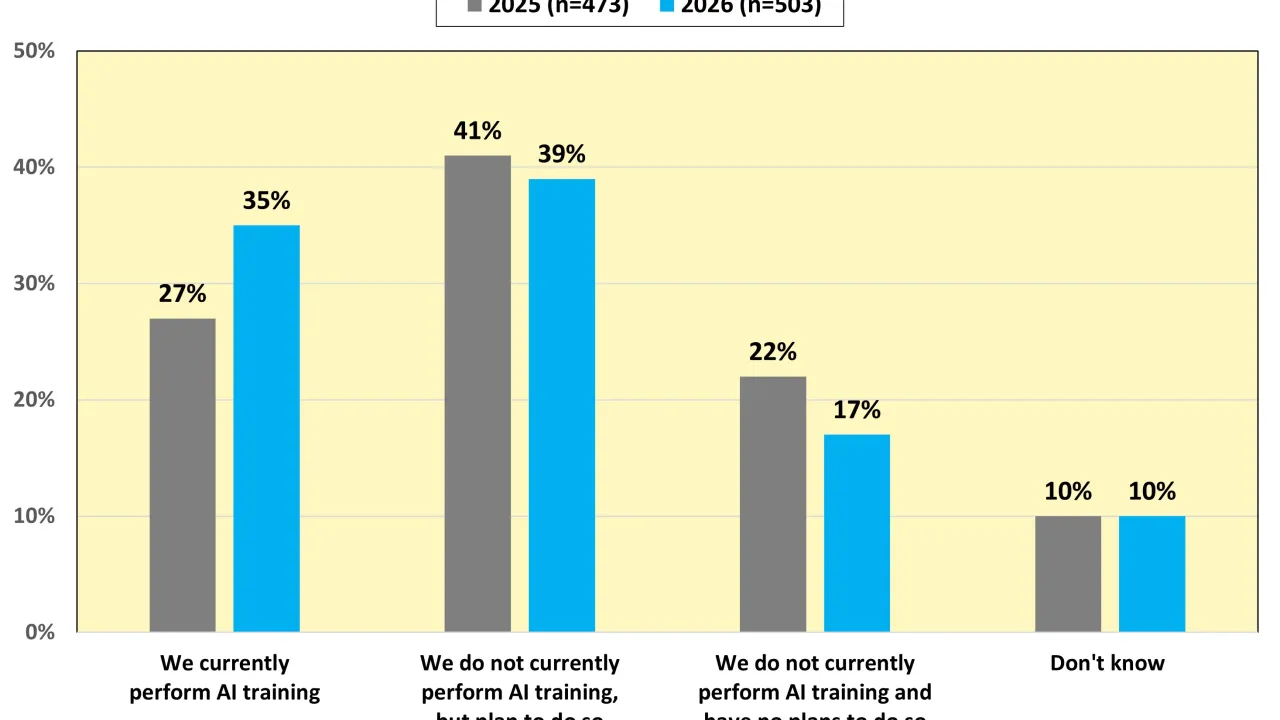

Results from Uptime Institute's 2026 AI Infrastructure Survey (n=1,141) focus on the data center infrastructure currently used, or planned, to host AI training and AI inference, as well as the industry's outlook on future AI usage. The…

The shortage of DRAM and NAND chips caused by demands of AI data centers is likely to last into 2027, making every server more expensive.

Nvidia CEO Jensen Huang's comment that liquid-cooled AI racks will need no chillers created some turbulence — however, the concept of a chiller-free data center is an old one and is unlikely to suit most operators.

Cybercriminals increasingly target supply chains as entry points for coordinated attacks, yet operators have overlooked many vulnerabilities, which persist despite their growing risk and severity.

Data4 needed to test how to build and commission liquid-cooled high-capacity racks before offering them to customers. The operator used a proof-of-concept test to develop an industrialized version, which is now in commercial operation.

Dr. Tomas Rahkonen

Dr. Owen Rogers

Daniel Bizo

Max Smolaks

John O'Brien

Jacqueline Davis

Paul Carton

Anthony Sbarra

Laurie Williams

Peter Judge
