Today, GPU designers pursue outright performance over power efficiency. This is a challenge for inference workloads that prize efficient token generation. GPU power management features can help, but require more attention.


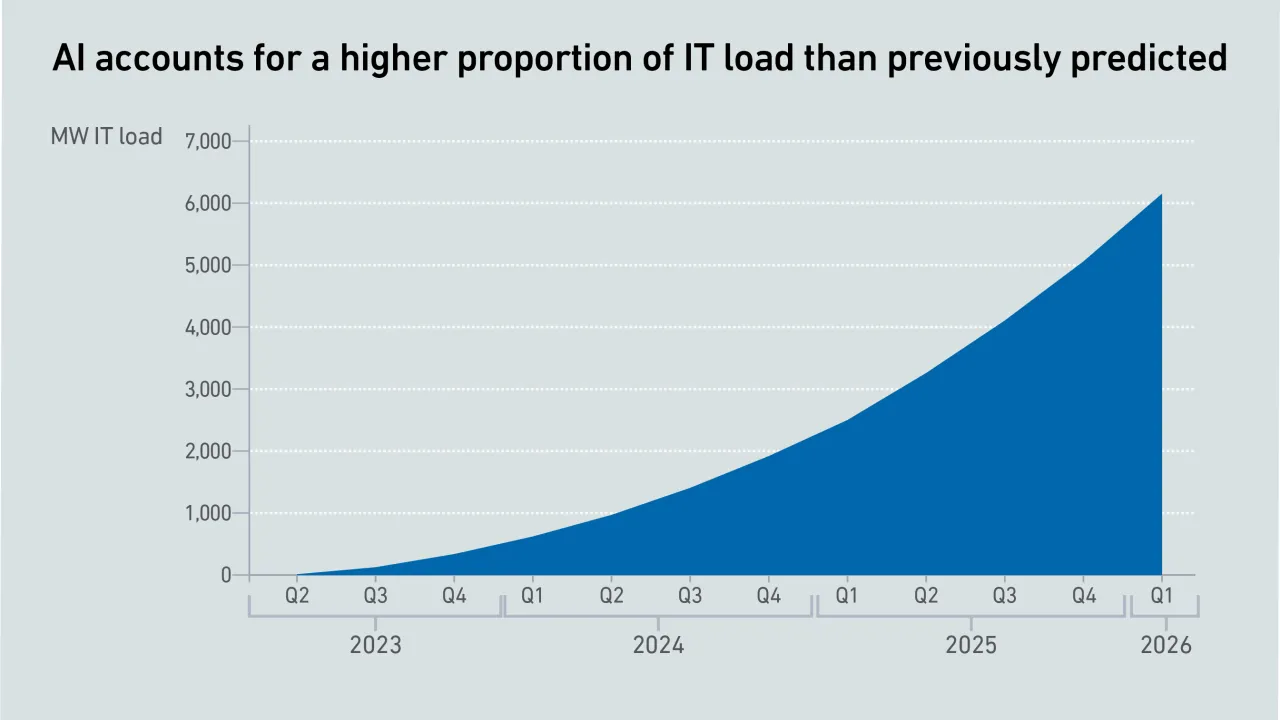

The past year warrants a revision of generative AI power estimates, as GPU shipments have skyrocketed, despite some offsetting factors. However, uncertainty remains high, with no clarity on the viability of these spending levels.

As AI workloads surge, managing cloud costs is becoming more vital than ever, requiring organizations to balance scalability with cost control. This is crucial to prevent runaway spend and ensure AI projects remain viable and profitable.

The US government's AI compute diffusion rules, introduced in January 2025, will be rescinded, with new rules to follow. The government warns that any dealings linked to advanced Chinese chips will require US export authorization. Operators still face tough demands.

In the US, the politicization of data center development is underway, driven by its impact on power prices. As state governments seek ways to protect consumers, operators will need to engage in the policy debate.

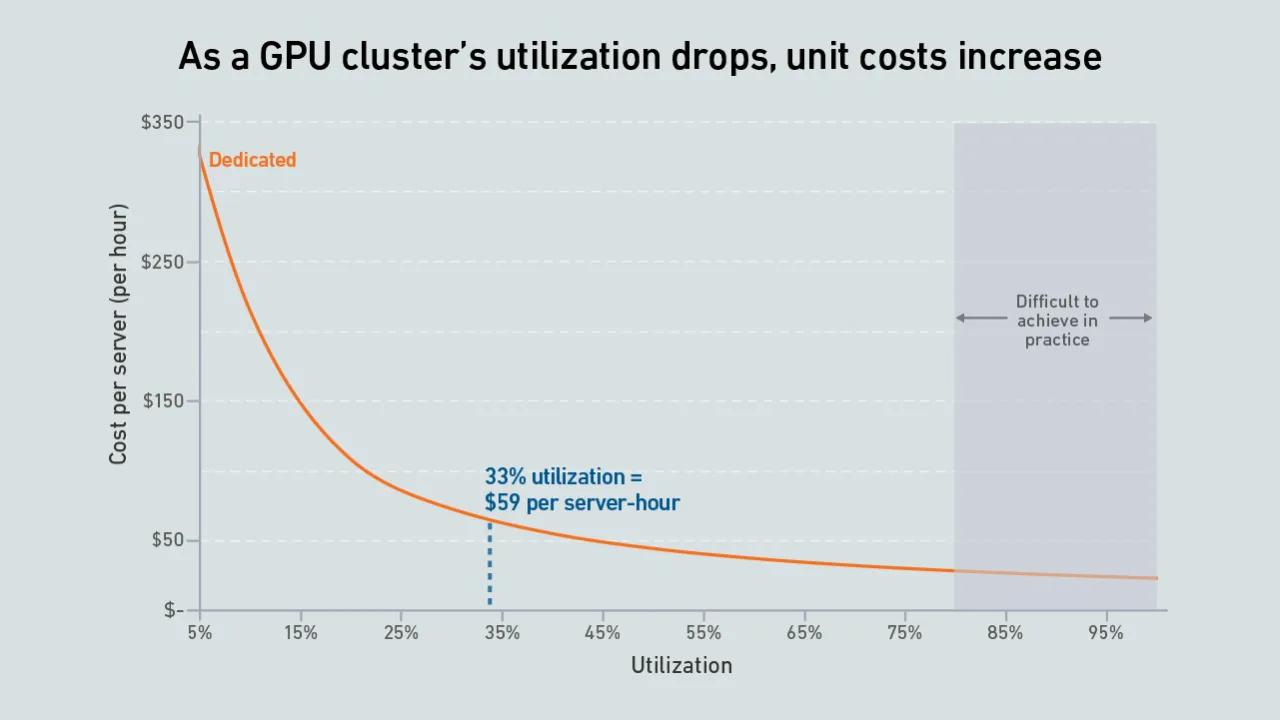

Many operators expect GPUs to be highly utilized, but examples of real-world deployments paint a different picture. Why are expensive compute resources being wasted, and what effect does this have on data center power consumption?

Digital twins are increasingly valued in complex data center applications, such as designing and managing facilities for AI infrastructure. Digitally testing and simulating scenarios can reduce risk and cost, but many challenges remain.

While AI infrastructure build-out may focus on performance today, over time data center operators will need to address efficiency and sustainability concerns.

High-end AI systems receive the bulk of the industry's attention, but organizations looking for the best training infrastructure implementation have choices. Getting it right, however, may take a concerted effort.

The high capital and operating costs of infrastructure for AI mean an outage can have a significant financial impact due to lost training hours.

Compared with most traditional data centers, those hosting large AI training workloads require increased attention to dynamic thermal management, including capabilities to handle sudden and substantial load variations effectively.

AI is not a uniform workload - the infrastructure requirements for a particular model depend on a multitude of factors. Systems and silicon designers envision at least three approaches to developing and delivering AI.

Operators and investors are planning to spend hundreds of billions of dollars on supersized sites and vast supporting infrastructures. However, increasing constraints and uncertainties will limit the scale of these build-outs.

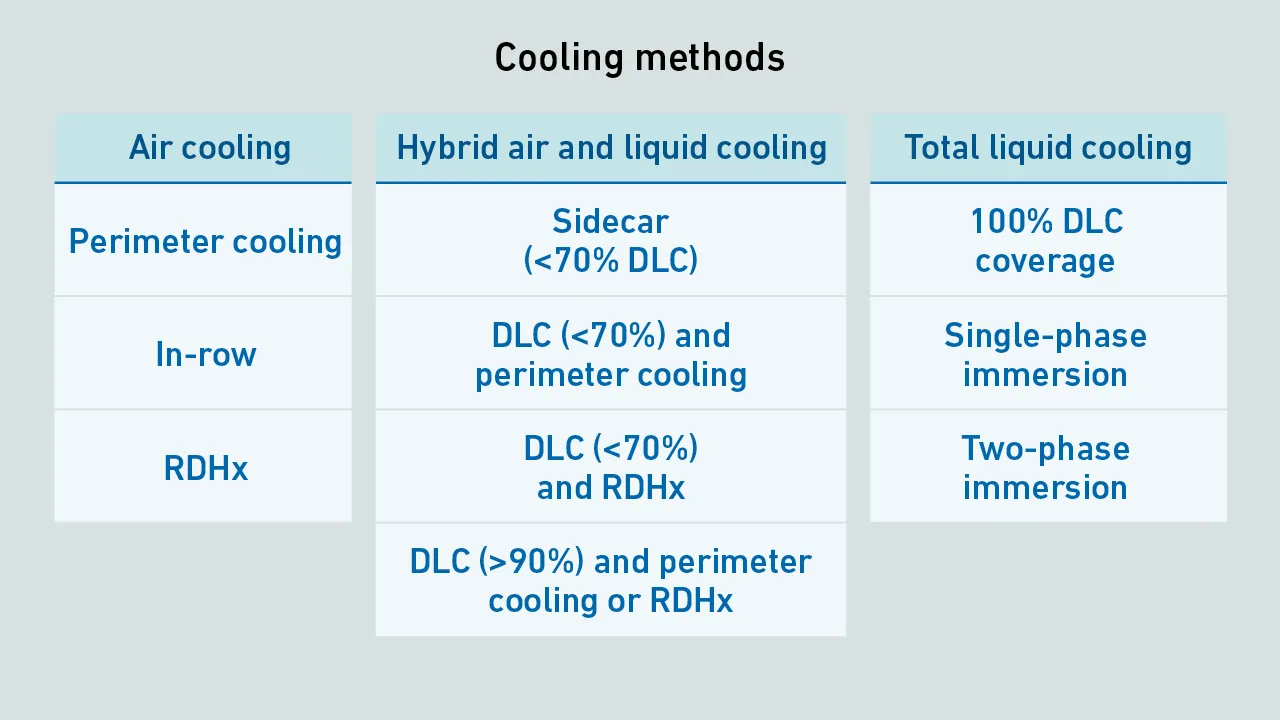

AI infrastructure increases rack power, requiring operators to upgrade IT cooling. While some (typically with rack power up to 50 kW) rely on close-coupled air cooling, others with more demanding AI workloads are adopting hybrid air and DLC.

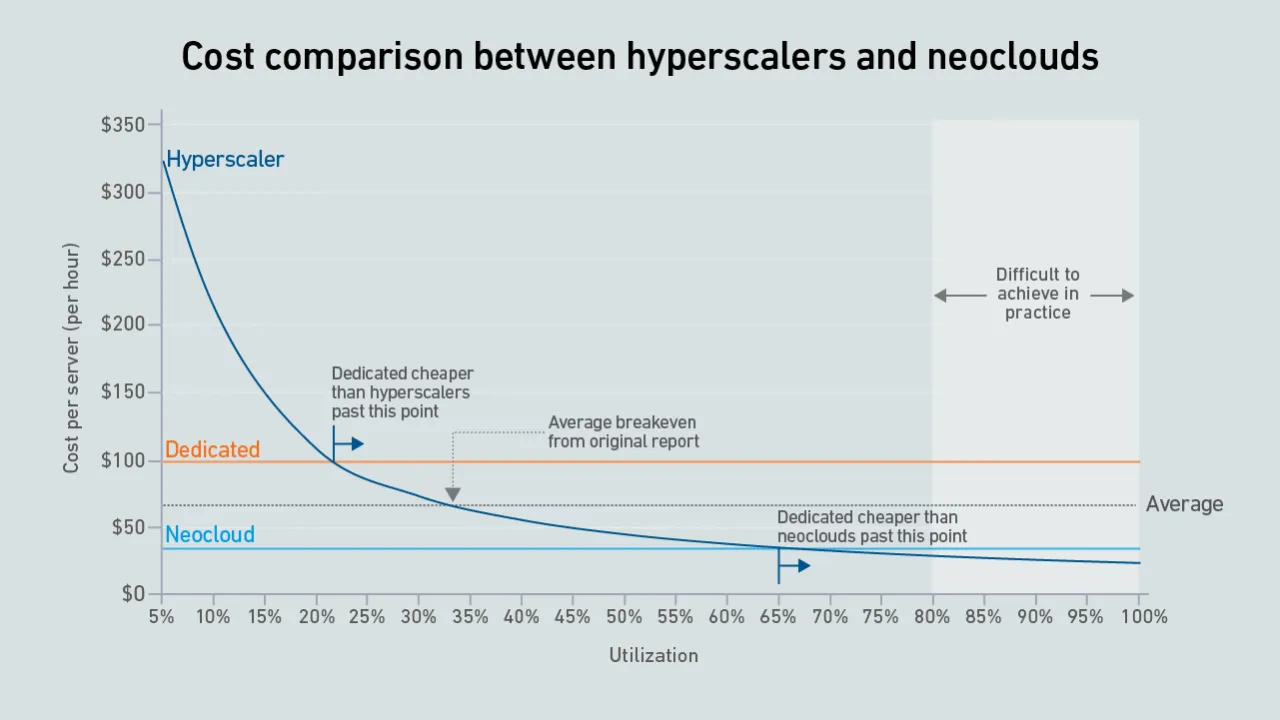

A new wave of GPU-focused cloud providers is offering high-end hardware at prices lower than those charged by hyperscalers. Dedicated infrastructure needs to be highly utilized to outperform these neoclouds on cost.

Max Smolaks

Daniel Bizo

Dr. Owen Rogers

Peter Judge

John O'Brien

Dr. Tomas Rahkonen
