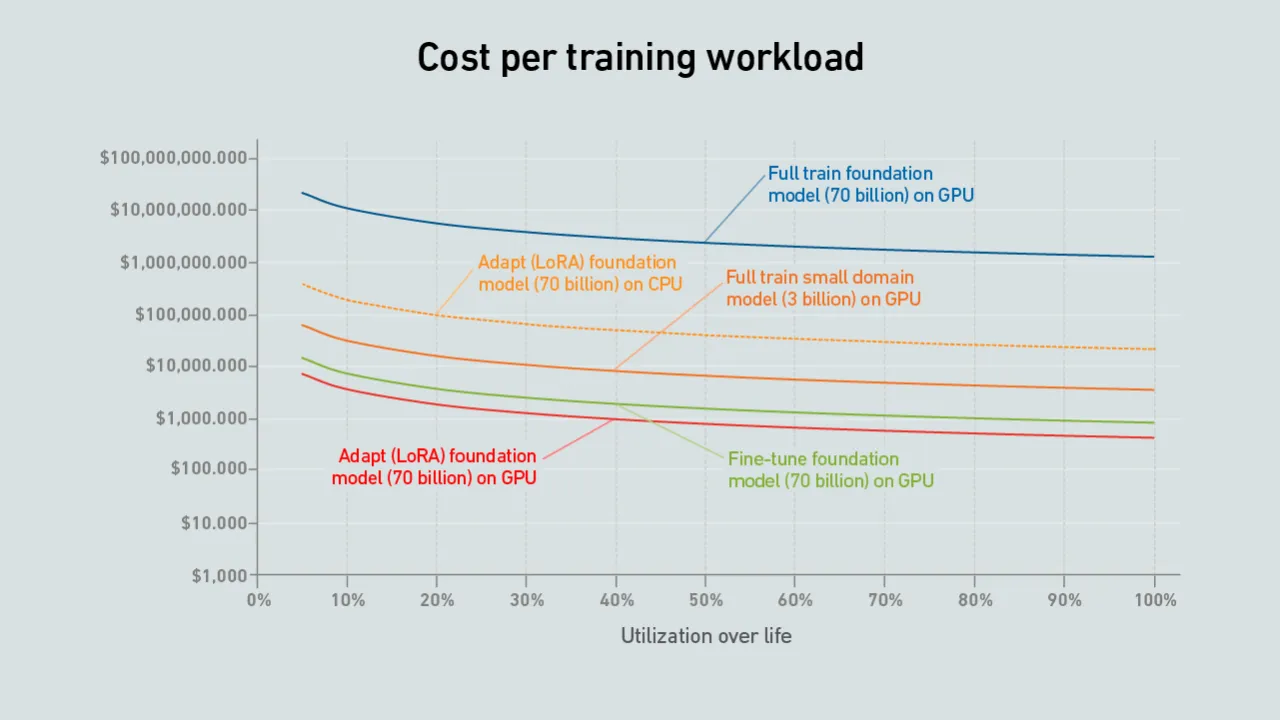

When choosing whether to develop a brand new LLM or fine-tune an existing one, the second option often makes more sense. It can be more cost-effective and requires fewer IT and facility resources.

By integrating new natural gas electricity generation with carbon capture, operators can safeguard net-zero targets threatened by a reliance on fossil power — but initial adoption will be costly and limited to specific geographic locations.

AI applications are becoming critical to enterprise operations, but service availability still varies sharply across providers. Inference services should be evaluated not only on model capability, but on operational maturity.

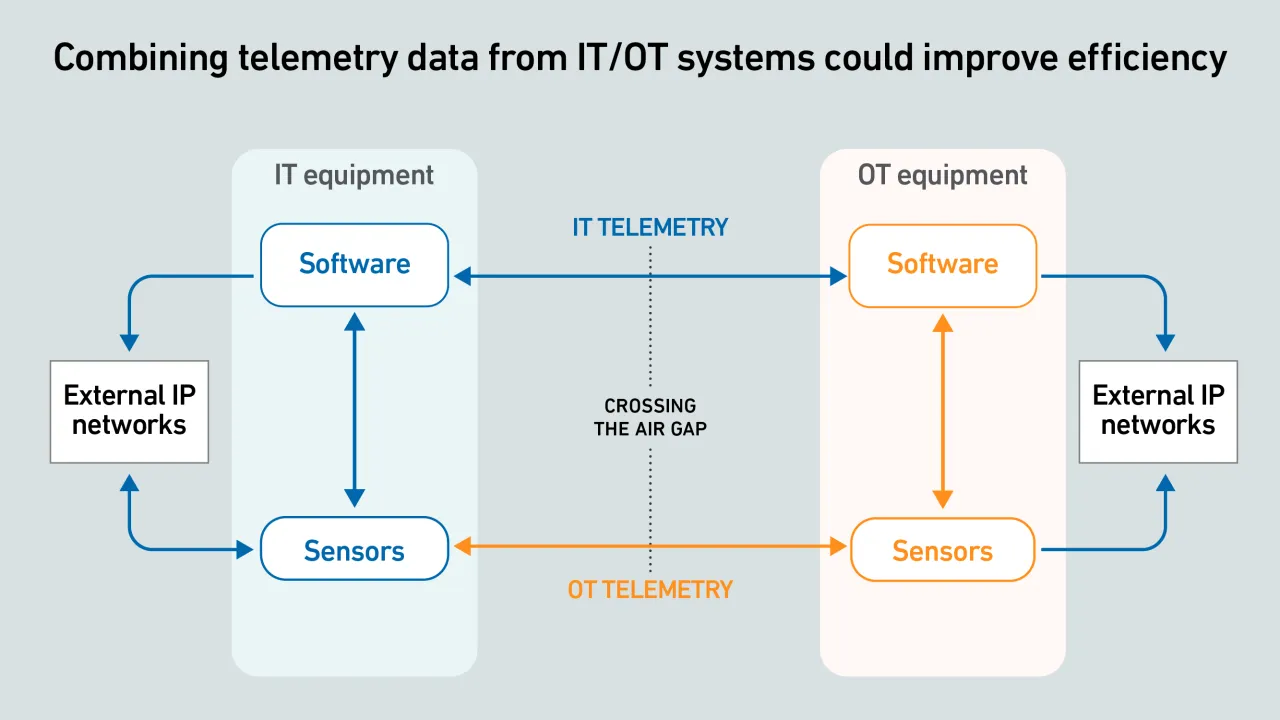

IT-OT equipment telemetry offers huge potential to improve visibility into live facility operations, but data exchange often fails because systems and protocols are incompatible.

Choosing whether to train a model from scratch or fine-tune an existing one comes down to the use case and cost — with hardware utilization remaining an important cost factor.

The topic of direct current (DC) power distribution in data centers is back, and this time it may be different.

Water treatment and chemicals giant Ecolab has agreed to pay $4.75 billion in cash for Canadian direct liquid cooling (DLC) specialist CoolIT. It is the latest sign of unabated demand for AI compute.

Growing public opposition to data center development has pushed many data center companies to invest in media and promotional campaigns. But, as opposition intensifies, this type of outreach is unlikely to sway public opinion.

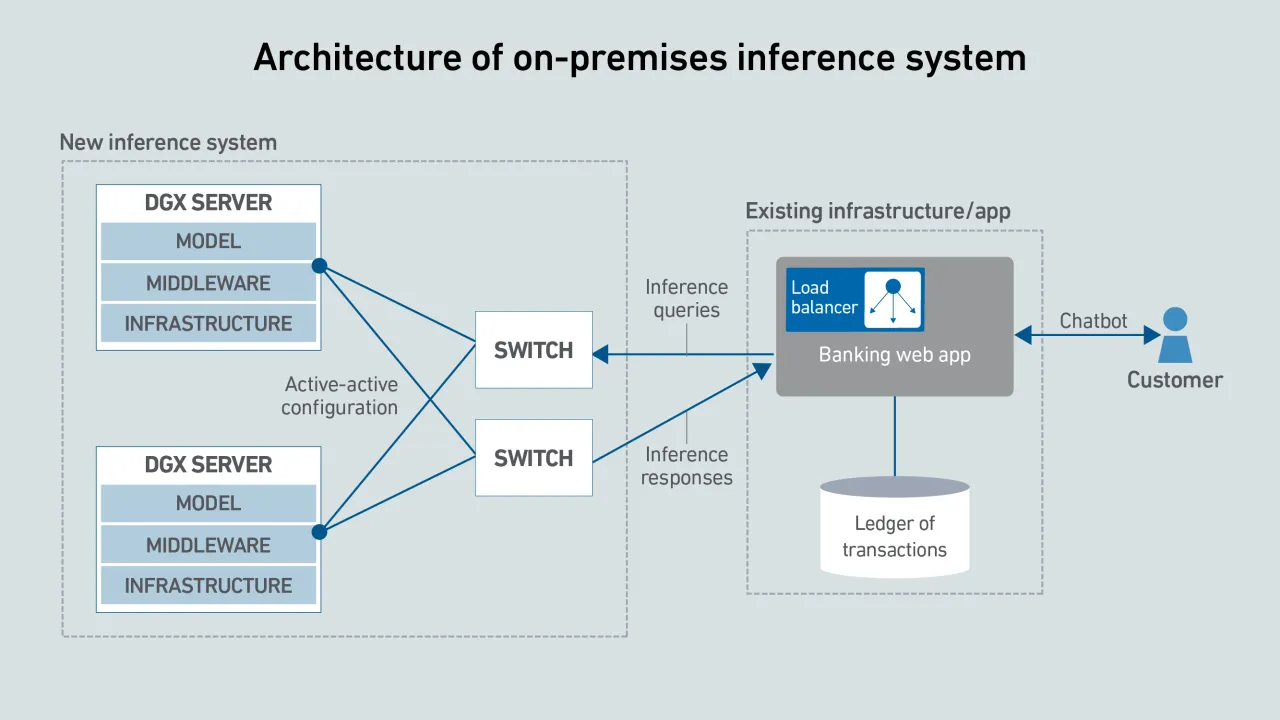

Although cloud platforms often offer the lowest cost for AI inference, on-premises deployment may be preferable due to application architecture, data locality and control requirements.

The cost of AI inference varies widely depending on deployment model, utilization and hardware. This costing tool compares on-premises, colocation and managed AI platforms on a like-for-like basis.

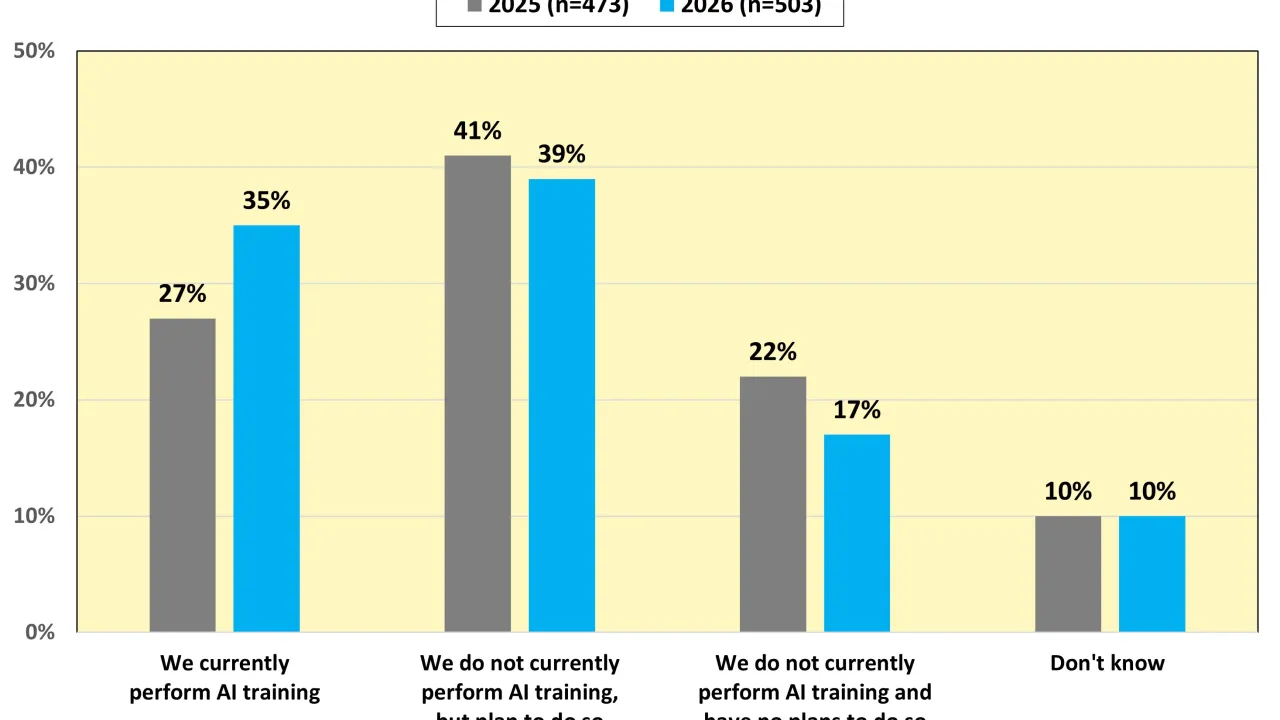

Results from Uptime Institute's 2026 AI Infrastructure Survey (n=1,141) focus on the data center infrastructure currently in use or planned for hosting AI training and AI inference, as well as industry outlooks on the future use of AI. The…

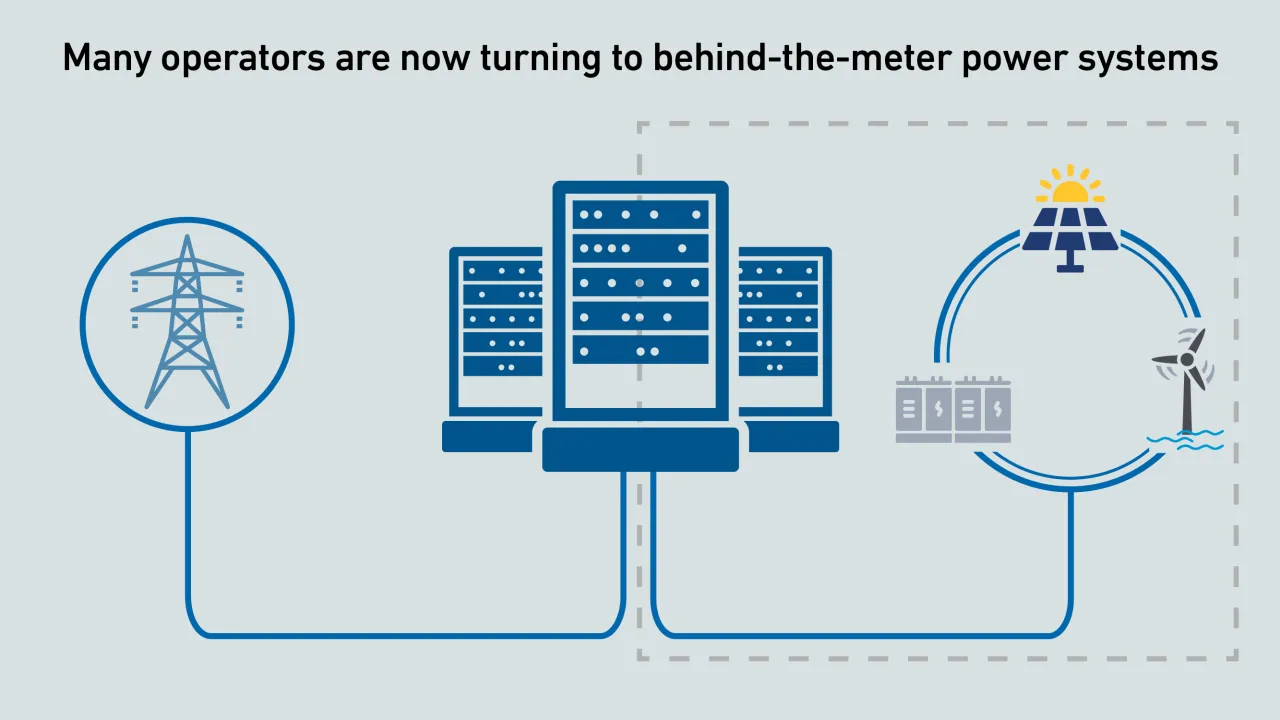

Operators are proposing behind-the-meter power systems to accelerate the buildout of new AI data center infrastructure. Executing this strategy requires regulatory changes in many jurisdictions and new data center design approaches.

As AI adoption spreads, most data centers will not host large training clusters — but many will need to operate specialized systems to run inferencing close to applications.

The shortage of DRAM and NAND chips caused by demands of AI data centers is likely to last into 2027, making every server more expensive.

AI in data center operations is shifting from experimentation to early production use. Adoption remains cautious and bounded, focused on practical automation that supports operators rather than replacing them.

Max Smolaks

Peter Judge

Dr. Owen Rogers

John O'Brien

Daniel Bizo

Rose Weinschenk

Paul Carton

Anthony Sbarra

Laurie Williams

Jay Dietrich

Dr. Rand Talib
