Water treatment and chemicals giant Ecolab has agreed to pay $4.75 billion in cash for Canadian direct liquid cooling (DLC) specialist CoolIT. It is the latest sign of unabated demand for AI compute.

Enterprises deploying AI inference need to choose carefully to limit costs and protect their data.

Although cloud platforms often offer the lowest cost for AI inference, on-premises deployment may be preferable due to application architecture, data locality and control requirements.

The cost of AI inference varies widely depending on deployment model, utilization and hardware. This pricing tool compares on-premises, colocation, cloud infrastructure and managed AI platforms on a like-for-like basis.

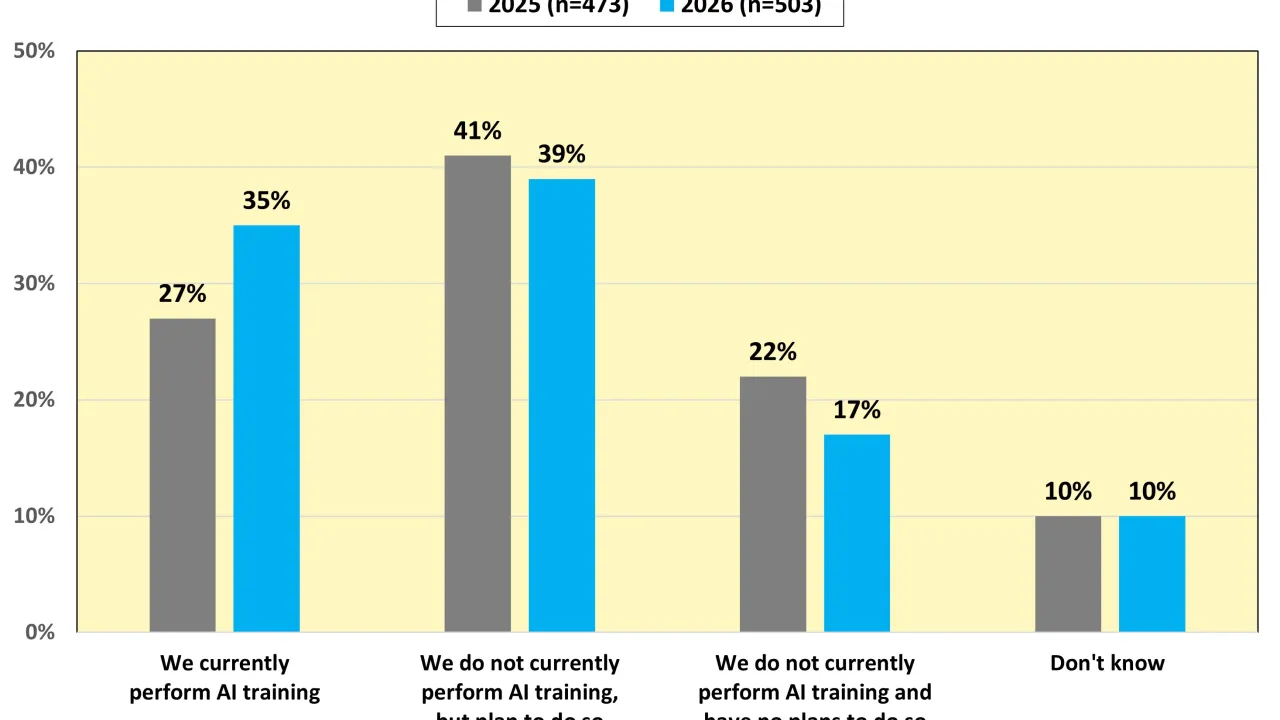

Results from Uptime Institute's 2026 AI Infrastructure Survey (n=1,141) focus on the data center infrastructure currently used, or planned for use, to host AI training and AI inference, as well as the industry's outlook on future AI usage. The…

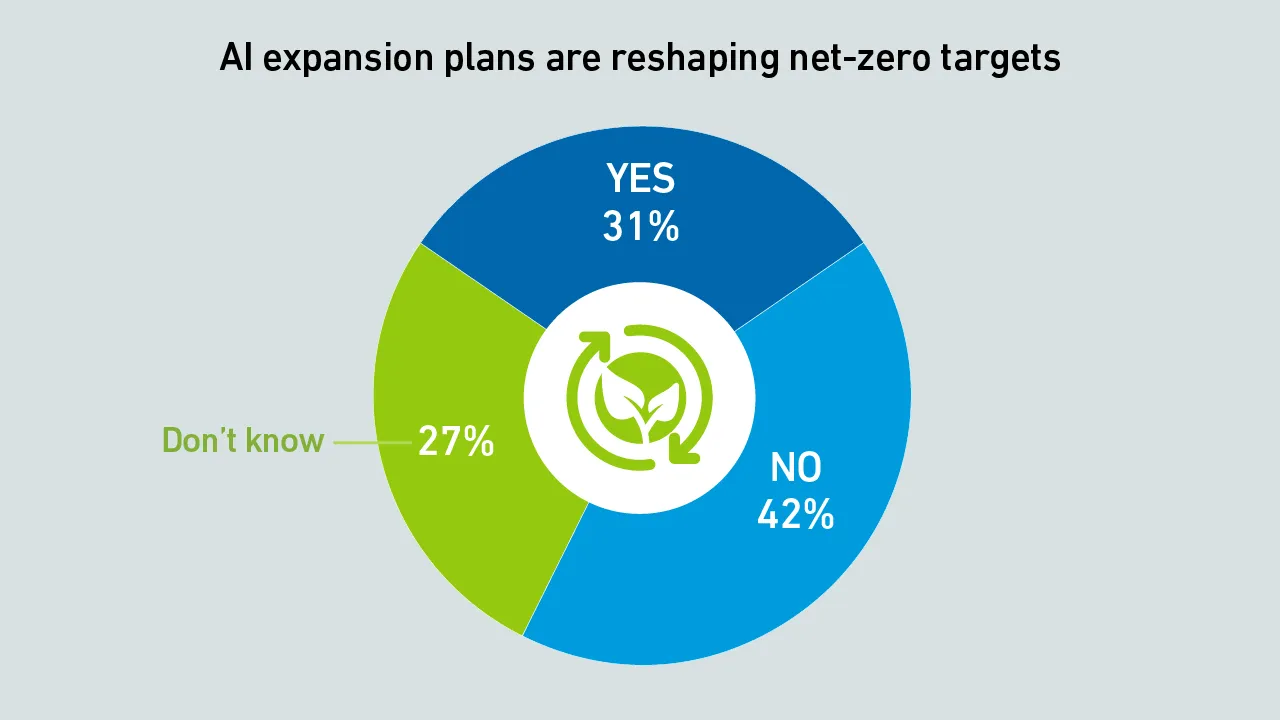

Surging demand for AI data centers is driving a shift to on-site natural gas power, even though operators admit this will delay the achievement of net-zero goals.

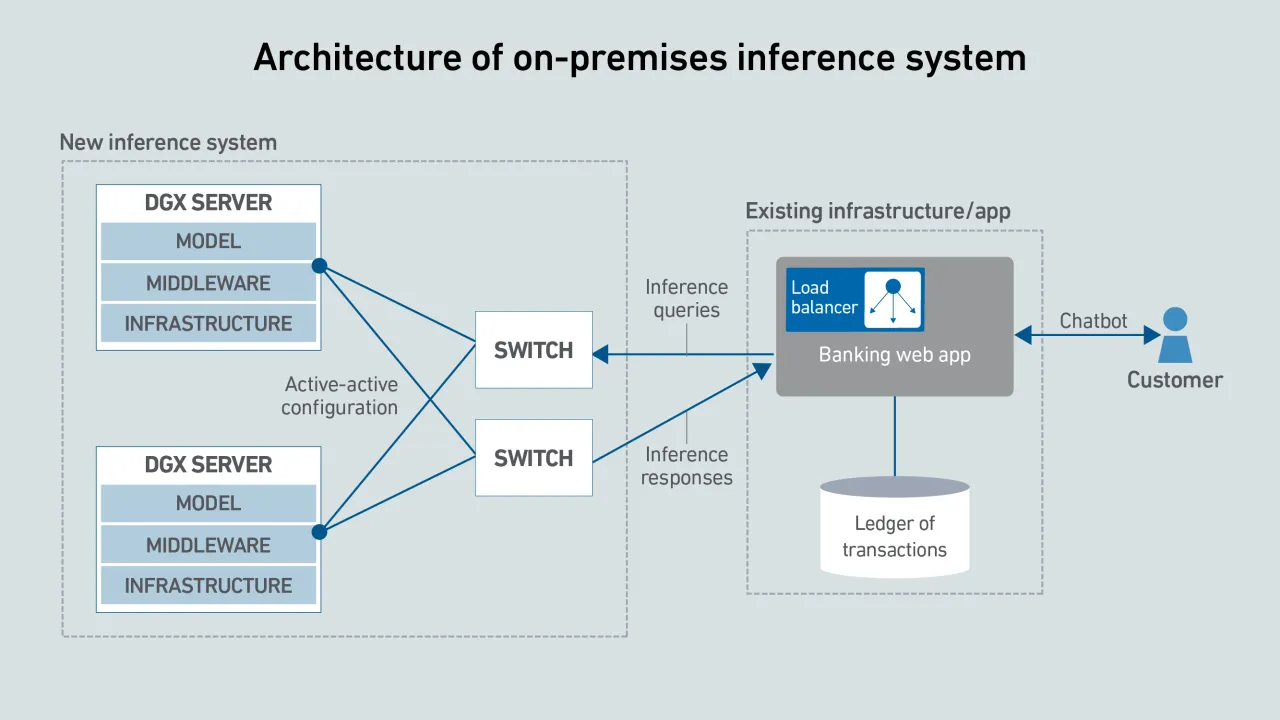

As AI adoption spreads, most data centers will not host large training clusters — but many will need to operate specialized systems to run inferencing close to applications.

Elon Musk's merger of his SpaceX aerospace company with his AI firm xAI has relit the thrusters under the concept of building big data centers in space. However, the technical difficulties involved may ultimately thwart his ambitions.

In 2026, enterprises will be more realistic about their use of generative AI, prioritizing simple use cases that deliver clear, timely value over those more innovative projects where returns — and successful outcomes — are less assured.

Investment in large-scale AI has accelerated the development of electrical equipment, which creates opportunities for data center designers and operators to rethink power architectures.

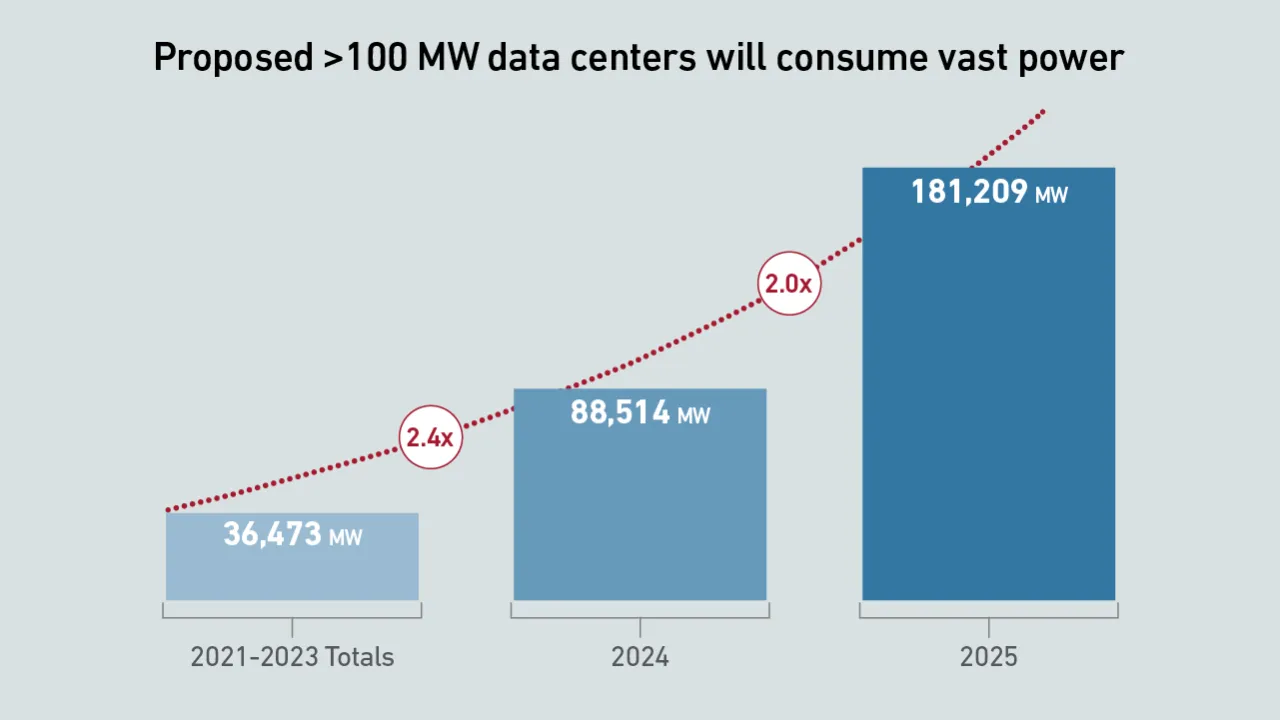

Data from Uptime Intelligence's giant data center analysis indicates that proposed power capacity and investment tied to giant data centers and campuses are at unprecedented levels.

DLC was developed to handle high heat loads from densified IT. True mainstream DLC adoption remains elusive; it still awaits design refinements to address outstanding operational issues for mission-critical applications.

Uptime Intelligence looks beyond the more obvious trends of 2026 and examines some of the latest developments and challenges shaping the data center industry.

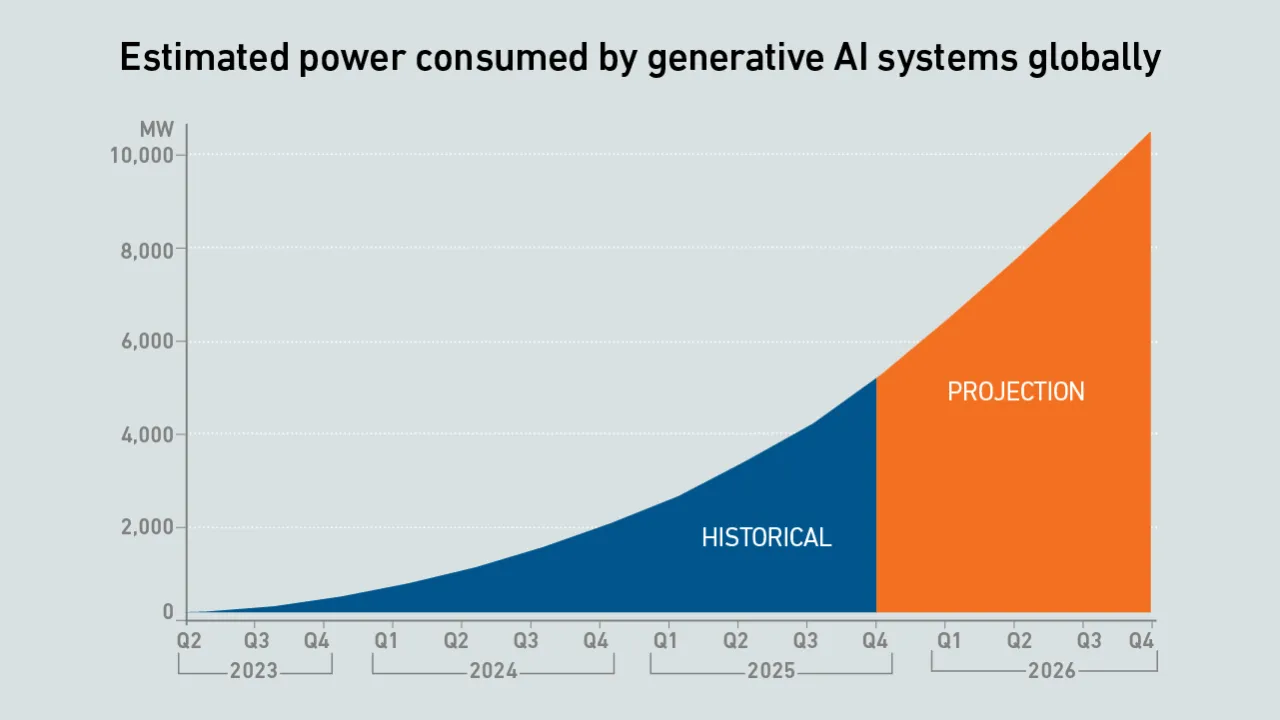

The updated model projects a doubling of power consumption by the end of 2026, with IT loads serving generative AI workloads breaking through 10 GW of capacity.

Financial institutions are embracing public cloud for some mission-critical workloads, and using it as a launchpad for AI development.

Daniel Bizo

Max Smolaks

Dr. Owen Rogers

Paul Carton

Anthony Sbarra

Laurie Williams

Peter Judge

Andy Lawrence

John O'Brien

Jacqueline Davis

Jay Dietrich

Douglas Donnellan

Dr. Rand Talib